Is Answer Engine Optimization (AEO) just a fancy word for traditional SEO?

If you ask most marketers, the answer is yes.

The prevailing theory is simple: LLMs like ChatGPT and Perplexity just scrape the top Google results, summarize them, and spit them out.

If this theory were true, the math would be predictable:

- Rank #1 on Google = Citation #1 on ChatGPT.

- Rank #17 (Page 2) on Google = Invisible on ChatGPT.

We wanted to test if this logic actually holds up in the wild. So, we stopped guessing and started logging.

At FlipAEO, we conducted a rigorous, manual stress-test of roughly 100 specific search queries to map the exact relationship between “Google Position” and “AI Visibility.” We wanted to see everything clearly, so we did not rely on any tool or automated script. We checked every query by hand, one at a time.

The results were not what we expected. In fact, they fundamentally disprove the idea that you need to be on Page 1 to win the AI citation.

The Setup: How We Tested It

To ensure this data was pure, we didn’t use automated API bulk-checks (which can sometimes hallucinate or serve cached data). We did this the hard way: Manual Verification.

- The Sample Size: 90-100 specific, high-intent queries within a single niche.

- The Engines:

  - Google Search (Incognito Mode, localized).

  - ChatGPT-5.2 (Web Search Mode enabled).

- The Methodology:

  - We entered a query into Google and noted the Top 20 Organic Results.

  - We entered the exact same query into ChatGPT.

  - We analyzed ChatGPT’s answer and the “Sources” (citations) used in the AI response.

  - We compared the overlap: Did the AI pick the Google winner, or did it pick someone else?
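We did this comparison by hand, but the overlap check in the last step is simple enough to express in a few lines. The sketch below is only an illustration of that step, not a tool we used: the function names (`domain`, `compare`) and the `.example` URLs are hypothetical, and it assumes that matching on domain rather than on exact URL is good enough.

```python
from urllib.parse import urlparse


def domain(url: str) -> str:
    """Normalize a URL to its host name, dropping a leading 'www.'."""
    host = urlparse(url).netloc.lower()
    return host[4:] if host.startswith("www.") else host


def compare(google_results: list[str], ai_citations: list[str]) -> dict:
    """Compare one query's Google results with the AI's cited sources.

    Both lists are expected in ranked order (best first).
    """
    google_domains = [domain(u) for u in google_results]
    cited_domains = [domain(u) for u in ai_citations]

    # 1-based Google position of each cited domain, or None if Google
    # did not list that domain at all in the results we recorded.
    positions = {
        d: (google_domains.index(d) + 1 if d in google_domains else None)
        for d in cited_domains
    }
    overlap = [d for d in cited_domains if positions[d] is not None]

    # "Did the AI pick the Google winner?"
    top_pick_matches = bool(
        google_domains and cited_domains
        and google_domains[0] == cited_domains[0]
    )
    return {
        "overlap": overlap,
        "positions": positions,
        "top_pick_matches": top_pick_matches,
    }
```

Feeding it the recorded Google results and the ChatGPT source list for a single query returns which cited domains also ranked on Google, where they ranked, and whether the two engines agreed on the winner.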

The Goal: To isolate whether AI is a “Mirror” (reflecting Google) or a “Judge” (making its own quality decisions).

Here is exactly what we found.

The Findings: Where Google and AI Are Building Their Own Roads

We analyzed everything manually (querying Google, querying ChatGPT, and noting everything down on paper so that we could match the results exactly and trust what we saw with our own eyes), looking for patterns of Convergence (where Google and AI agreed on the winner) and Divergence (where they disagreed).

While there was certainly overlap for major authority sites, the data quickly revealed that the “AI follows Google” rule is not a rule at all. It is barely a suggestion.

Finding #1: The “Page 2” Anomaly (Deep Retrieval)

The most surprising discovery of this test was the sheer depth of the AI’s retrieval process.

The Common Myth:

“You need to rank in the Top 3 (or at least on Page 1) of Google to be seen by ChatGPT.”

The Reality:

In approximately 40% of our test cases, the Primary Citations (the first three sources linked in the AI answer) did not come from Google’s first page.

We found multiple instances where the AI skipped the entire top 10 results and cited a source ranking on Google Page 2 (Positions 11–20). In approximately 12-15 extreme outlier cases, the primary citation was pulled from Page 3, 4, or 5.

Note: We did not see a single source from beyond Page 5 of Google. (This is the most important part.)
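For anyone repeating this tally, turning a logged Google position into a page number is simple arithmetic. The snippet below is a minimal sketch, assuming ten organic results per page (consistent with “Page 2 = Positions 11–20” above). The helper names and the sample positions in the usage note are made up for illustration and are not our data.

```python
from collections import Counter

RESULTS_PER_PAGE = 10  # assumption: Page 2 = Positions 11-20


def google_page(position: int) -> int:
    """Map a 1-based organic position to its Google results page."""
    if position < 1:
        raise ValueError("position must be 1 or greater")
    return (position - 1) // RESULTS_PER_PAGE + 1


def page_distribution(positions: list[int]) -> dict[int, float]:
    """Share of primary citations that came from each Google page."""
    if not positions:
        return {}
    counts = Counter(google_page(p) for p in positions)
    return {page: counts[page] / len(positions) for page in sorted(counts)}
```

For example, `page_distribution([1, 17, 4, 23])` puts half of the citations on Page 1 and a quarter each on Pages 2 and 3.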

The “Position 17” Scenario

One specific data point illustrates this perfectly.

- The Query: A long-tail query about a process in the specific niche.

- Google’s #1 Rank: An industry-leader site (High Domain Authority).

- Google’s #17 Rank (Page 2): A small, niche-specific blog post from a brand that answered the query with structured content, including a table, on its page (it had topical authority in that niche).

The Outcome: ChatGPT completely ignored the Google #1 result. It dug down to Position 17, extracted the data from the table, and cited that Page 2 site as the absolute authority.

What This Means for You

This proves that LLMs (specifically ChatGPT with Search) do not just “scrape the top 3 results.” They are performing a “Deep Retrieval”.

The model appears to be fetching a much larger pool of candidates (likely the top 30–50 organic results) and then re-ranking them internally. It discards the “SEO Winners” (high backlinks, low density) and promotes the “Information Winners” (high density, clear structure) even if Google has buried them on Page 2, 3 or 4.
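To make the hypothesis concrete, here is a toy “fetch a pool, then re-rank” sketch. It is not how ChatGPT actually works, since we cannot see inside the model. The `Candidate` fields, the 0 to 1 `density` and `structure` scores, the equal weighting, and the `pool_size` default are all assumptions chosen to mirror the behavior we observed.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    url: str
    google_position: int  # 1-based organic position
    density: float        # hypothetical 0-1 information-density score
    structure: float      # hypothetical 0-1 structure/clarity score


def rerank(candidates: list[Candidate], pool_size: int = 50) -> list[Candidate]:
    """Keep candidates inside the consideration pool, then order them by
    content quality alone, ignoring the original Google position."""
    pool = [c for c in candidates if c.google_position <= pool_size]
    # Equal weighting of the two scores is an arbitrary illustrative choice.
    return sorted(pool, key=lambda c: c.density + c.structure, reverse=True)
```

Under these assumptions, a dense, well-structured page at Position 17 outranks a thin page at Position 1, while an even better page at Position 80 is never considered because it sits outside the pool.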

The Takeaway: You do not need to beat the giants on Domain Authority to win the citation. You just need to be indexed in the “Consideration Pool” (Top 30-50, based on what we saw in this small set of queries) and have the superior answer.

Finding #2: The Niche Authority Override (The Reddit Paradox)

If you have searched for anything on Google recently, you know the reality: Reddit is everywhere.

Google’s recent core updates have heavily prioritized “User Generated Content” (UGC) and big-brand publishers (like Forbes or major news outlets) for informational queries. Google’s algorithm seems to be prioritizing Engagement and Discussion.

But our test revealed that ChatGPT does not share this bias.

What We Found

In several test cases, we saw a distinct split:

- Google (#1 Rank): A Reddit thread or a LinkedIn post.

- ChatGPT (Primary Citation): A smaller, niche-specific website that demonstrated deep topical authority.

The Reddit Example:

For approximately 3-4 specific queries, Google ranked a Reddit thread at Position #1.

ChatGPT, however, did not use the Reddit thread in its answer, although the thread did appear at the bottom of ChatGPT’s list of cited sources. Instead, it pulled its primary answer from a specialized brand that was ranking at Position #8.

Our Thesis: Why Is This Happening?

The AI is judging the content by a different standard: Information Density vs. Discussion.

- Reddit threads are often unstructured and filled with opinions, jokes, and conflicting advice (especially users attacking each other), and they rarely reach a solid conclusion. This makes them “noisy” data for an LLM trying to construct a single, factual answer.

- Niche Authority Sites tend to present the answer in a structured format (definitions, steps, data).

The Insight:

While Google is currently chasing Brand Authority / Popularity (traffic signals), AI Search is chasing precision (semantic signals).

This is a critical finding for independent publishers and brands. You might feel “bullied” out of Google’s top spot by Reddit, but you are likely beating Reddit inside the LLM because you are providing the structured, expert data that a forum simply isn’t.

The Takeaway: Do not try to copy Reddit. Do not water down your content to be “chatty.” Double down on being the Topical Authority. The AI is looking for the “Expert Node,” not the “Popular Vote.”

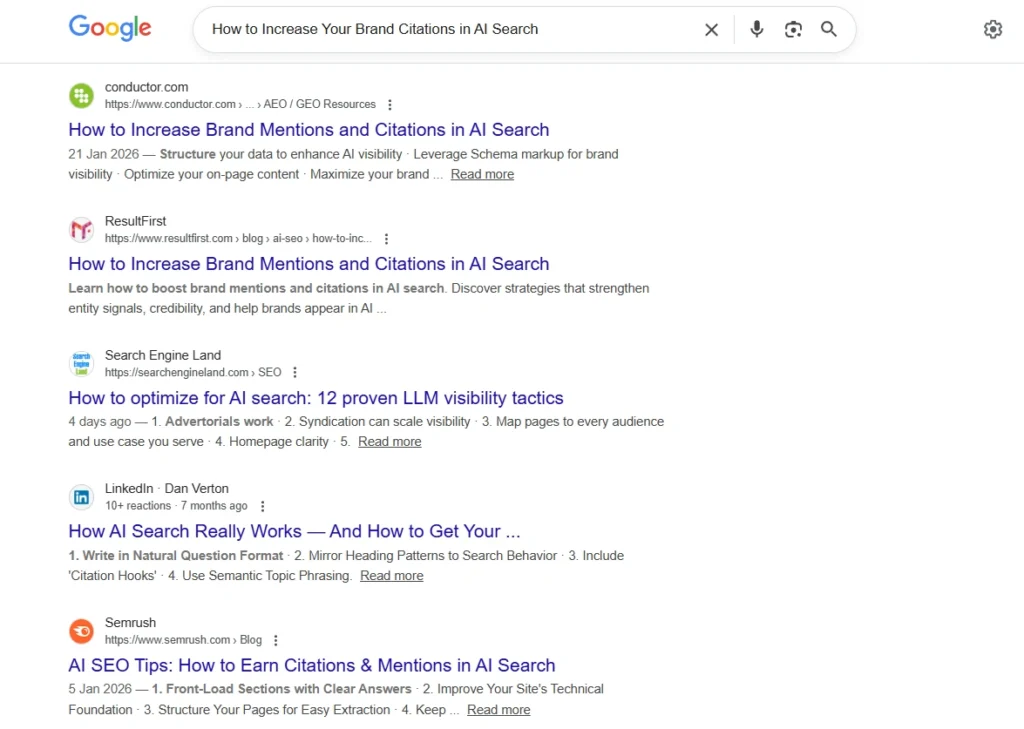

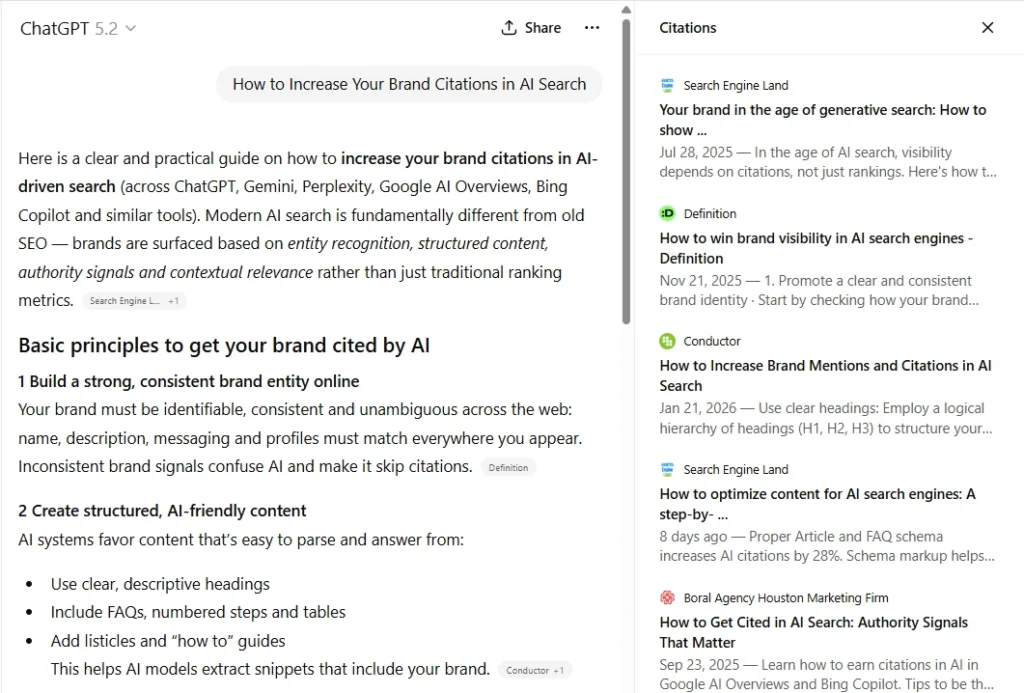

Finding #3: The Structure Filter (Why High-Ranking Sites Get Rejected)

Perhaps the most frustrating discovery for SEOs in our data was the Rejection Rate.

We found multiple instances where a website was winning the SEO game (ranking #1, #2, or elsewhere on Page 1 of Google for a high-volume query) but was completely absent from ChatGPT’s citation list.

The “Unstructured” Penalty

When we manually audited these “rejected” winners, we noticed an obvious pattern. The sites that ranked well on Google but failed in AI often suffered from Low Extractability.

Common traits of the “Rejected” #1 Rankings:

- Buried Answers: The direct answer to the user’s question was hidden in the 8th paragraph after a long personal story.

- Wall of Text: 2,000 words of unbroken text with no bullet points, tables, bolding, or clear subheaders. The typical pattern was a heading, 3-4 paragraphs, another heading, 4-5 paragraphs, and so on.

The “Clean” Alternative

In these same cases, ChatGPT often skipped the #1 result and cited a site from Position #4 or #5 (or even Page 2, as mentioned in Finding #1).

Why? Because the lower-ranking site presented the answer in a Table, a Numbered List, or a bolded definition and, most importantly, it answered the query first instead of opening with fluff.
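If you want a rough self-audit for the “Wall of Text” problem, you can count how many words a page carries per structural element. The sketch below uses only Python’s standard `html.parser`. The tag list and the metric itself are our own heuristic assumptions, not anything ChatGPT is known to compute, and the metric does not detect whether the answer comes first.

```python
from html.parser import HTMLParser

# Assumption: these tags are a reasonable proxy for "structure".
STRUCTURAL_TAGS = {"table", "ul", "ol", "strong", "b", "h2", "h3"}


class StructureCounter(HTMLParser):
    """Counts structural tags and visible words in an HTML document."""

    def __init__(self) -> None:
        super().__init__()
        self.structural = 0
        self.words = 0

    def handle_starttag(self, tag, attrs):
        if tag in STRUCTURAL_TAGS:
            self.structural += 1

    def handle_data(self, data):
        self.words += len(data.split())


def words_per_structural_element(html: str) -> float:
    """Lower is better; infinity means no structure was found at all."""
    parser = StructureCounter()
    parser.feed(html)
    parser.close()
    if parser.structural == 0:
        return float("inf")
    return parser.words / parser.structural
```

A page that is one long run of paragraphs scores infinity, while a page that breaks the same content into subheadings, lists, and bolded definitions scores much lower. Treat the number as a prompt for a manual review, not as a ranking signal.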

The Logic: It’s About Saving Compute

Google’s bot is incredibly sophisticated at parsing messy HTML to find relevance. It has the luxury of time and history.

LLMs, however, operate under strict constraints regarding Token Efficiency and Compute Cost.

As one researcher noted in our community discussion, AI models likely enforce an “Exclusion Zone” to save resources. If a site is “messy” or requires excessive processing to extract the answer, it becomes computationally expensive.

If that theory holds, the model doesn’t just “prefer” clean data; it effectively “mathematically prunes” high-friction sources from the selection set to save compute, moving instantly to the next cleanest, most structured option.

The Takeaway:

You can “SEO” your way to the top of Google with backlinks and history. But you must “Structure” your way into the AI response. If your content isn’t machine-readable, you are invisible to the machine.

Finding #4: The Grounding Standard (Wikipedia as the Anchor)

If you are an SEO, you are used to hating Wikipedia. It occupies the top spot for almost every definition keyword, pushing everyone else down.

In the world of AI Search, Wikipedia is even more dominant but in a different way.

The Data:

In our test, Wikipedia appeared as a citation in approximately 40% of the informational queries.

The “Trust Anchor” Theory

Why is the AI so obsessed with Wikipedia? Our theory is that it’s not just because the content is good; it’s because LLMs use it as a Grounding Source.

Large Language Models are prone to “hallucinations” (making things up). To prevent this, the model often looks for a “Trust Anchor”: a highly trusted source that establishes the baseline facts.

- Wikipedia provides the broad, agreed-upon consensus.

- Your Site provides the specific details, the fresh data, or the “How-To” implementation.

The Pattern:

We frequently saw a citation pair that looked like this:

- Citation [1]: Wikipedia (Defining the concept).

- Citation [2]: A Niche Site (Explaining how to apply it).

The Takeaway:

Stop trying to “outrank” Wikipedia. In the AI era, you are not competing to replace Wikipedia; you are competing to be the “Actionable Companion” to it.

If Wikipedia defines what something is, your content should explain how to do it. The AI builds its answer by combining those two distinct types of data.

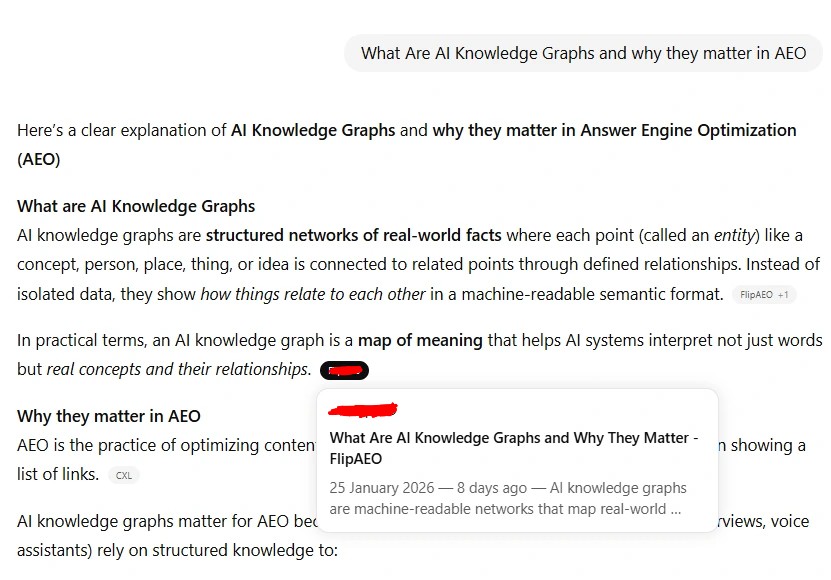

Finding #5: The “Fresh Brand” Consistency (The Great Equalizer)

One of the most persistent questions in SEO is: “Does AI have a Sandbox?”

(i.e., Do new websites have to wait months before they are trusted enough to be cited?)

To test this, we tracked a brand new website (less than 10 days old) with almost zero Domain Authority (Moz DA 1 and Ahrefs DR 2).

The results provided the strongest evidence yet that AI evaluates content differently than Google.

The Tale of Two Rankings

We observed this fresh brand across two different Long-Tail Queries.

- Scenario A (Convergence): For a specific low-competition query, Google ranked this new site #1 immediately (likely due to a lack of other relevant answers from authority sites).

  - ChatGPT Result: Cited the site in the source list but not in the answer.

  - Analysis: This was expected. Google and AI agreed there was only one good answer, but ChatGPT did not trust it enough to make it the #1 citation.

- Scenario B (Divergence – The Sandbox Breaker): For another, slightly more competitive query, Google placed the new site at Position #17 (Page 2). Google’s algorithm likely hesitated to rank a DA 1 site on Page 1 against established competitors.

  - ChatGPT Result: Cited the site in its answer as the #1 Source, ignoring the 16 “more authoritative” sites above it.

Why Did This Happen?

Even though the domain was new, the content was engineered for Topical Depth. It answered the specific intent of the query with a direct, structured format that the established sites (ranking 1–16) missed.

The Insight:

Google’s algorithms are heavily weighted towards Trust & History (which hurts new sites).

AI algorithms are heavily weighted towards Semantic Fit & Structure (which treats all sites equally).

If you are a new brand, you do not have to wait 6 months to get visibility. While you are sitting in Google’s “Sandbox” on Page 2, you can be actively generating traffic from AI Search—if your content is structured correctly.

The Takeaway:

Topical Authority is a “Portable Asset.” If you truly nail the answer, the AI will find you on Page 2, 3 or 4 and pull you to the front, regardless of your Domain Age.

Finding #6: The “Authority” Overlap (Where Google & AI Agree)

We have talked a lot about where the engines disagree. But to be intellectually honest, we must also highlight where they align.

The Data:

In the majority of our queries, there was still significant overlap. On average, 3 to 5 websites that appeared on Google’s Page 1 also appeared in ChatGPT’s source list.

However, the Ranking Order was almost never identical.

- Google’s #1 (often a giant publisher) might slide down to Citation #4 in ChatGPT.

- Google’s #5 (a highly specific niche site) might jump up to Citation #1.
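The re-ordering described above can be measured per query as a simple rank shift. The sketch below is a minimal illustration: `rank_shifts` is a hypothetical helper, the `.example` domains in the usage note are made up, and it assumes each domain appears at most once in each list.

```python
def rank_shifts(google_page1: list[str], ai_sources: list[str]) -> dict[str, int]:
    """For every domain present in both lists, return
    (Google position - citation position).

    Positive values mean the site moved up in the AI answer,
    negative values mean it slid down, and 0 means no change.
    """
    google_rank = {d: i for i, d in enumerate(google_page1, start=1)}
    return {
        d: google_rank[d] - i
        for i, d in enumerate(ai_sources, start=1)
        if d in google_rank
    }
```

With Google’s Page 1 as `["giant.example", "b.example", "c.example", "d.example", "niche.example"]` and ChatGPT’s sources as `["niche.example", "wiki.example", "c.example", "giant.example"]`, the niche site gains four places, the giant publisher loses three, and the number of keys in the result (three) is the overlap count for that query.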

The Truth:

Google and AI are not enemies. They are both trying to identify “Authority.”

- If you have High Domain Authority (Backlinks) AND High Topical Authority (Content Depth), you will likely win on both platforms.

- The divergence happens when you rely only on Backlinks (Google likes you, AI tolerates you) or only on Content Depth (Google ignores you, AI loves you).

The Takeaway:

Traditional SEO is not dead. Being on Page 1 of Google is still the best way to get “into the consideration set” for the AI. But simply being on Page 1 is no longer a guarantee that you will be the answer the user sees.

Verdict: The Triangle of Visibility

So, does ChatGPT just scrape the top 3 results? Absolutely not.

Our study proves that LLMs are performing a sophisticated, multi-stage retrieval process that goes deeper than most SEOs realized. They are willing to dig to Page 2 (and beyond) to find the single best answer.

Based on this 100-query test, we can now map the “Triangle of Visibility” for the AI Era. To win the citation, you need to hit three specific targets:

- Indexability (The Ticket In): You don’t need to be #1, but you need to be in the “Consideration Pool” (likely the top 30–50 organic results).

- Topical Purity (The Relevance Signal): You must stick to your niche. The AI punished “Generalist” sites (like Reddit or News portals) and rewarded “Specialist” sites.

- Structural Trust (The Format Signal): You must present your data in tables, lists, and direct definitions. If the AI has to struggle to parse your text, it will move to the next candidate.

The Final Lesson

Stop obsessing over “Rank.”

In the world of AI Search, Rank is Vanity, Citation is Sanity.

You can be the #17 result on Google, invisible to searchers, and still be the #1 Answer on ChatGPT, serving thousands of users who never even clicked a blue link.

The door is open. The AI is looking for experts, not just popular websites.

Are you structured to be found?