For twenty years, the formula for digital visibility was absolute: Rank on Page 1 = Win.

In 2026, that formula is breaking.

We are witnessing the most significant architectural shift in the history of information retrieval: the transition from Search Engines (Google) to Answer Engines (ChatGPT, Perplexity, Gemini).

For marketers and SEOs, this shift is violent. The algorithms that power Large Language Models (LLMs) do not care about your Domain Authority in the same way Google does. They do not care how many backlinks you bought in 2015. They do not care about your “keyword density.”

They care about Semantic Relevance.

When a user asks an AI a question, the model acts as a real-time research analyst: it retrieves a shortlist of sources, reads them, and synthesizes a single answer within seconds. If you are not on that shortlist, you are not just ranked lower; you are invisible.

This guide decodes the “Black Box” of AI Search.

We will break down the technical architecture of Retrieval-Augmented Generation (RAG), explain exactly how LLMs “read” and “rank” your content, and define the new rules of Answer Engine Optimization (AEO) that will determine who survives the platform shift.

The Core Mechanism: How LLMs “Read” the Web

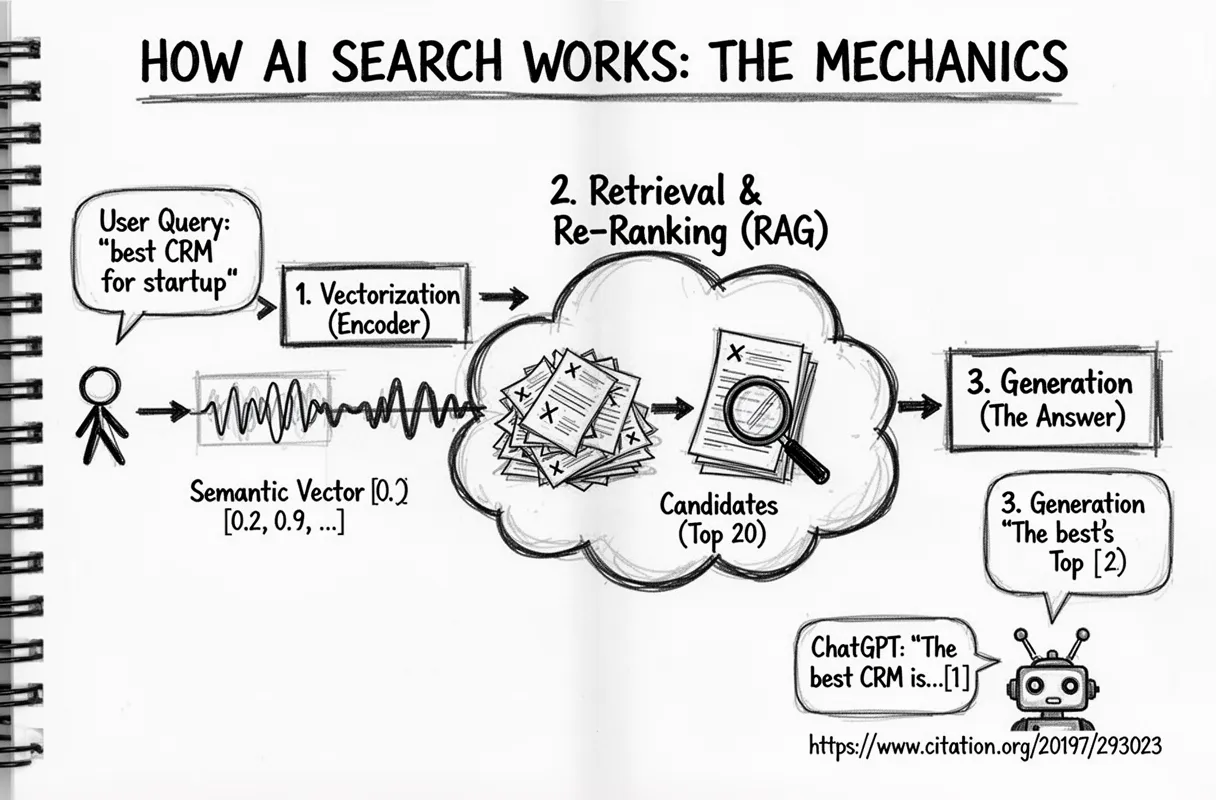

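Most people assume ChatGPT “knows” the answer. For anything recent or specific, it usually doesn’t. When connected to the web (SearchGPT), it acts as a retrieval system. At answer time it doesn’t crawl the web page by page the way a traditional “Crawler” does; it runs a RAG Pipeline against an index that has already been built.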

Definition: Retrieval-Augmented Generation (RAG)

RAG is the process where an LLM fetches external data (from a search index or vector database) to answer a specific query outside its training data. It combines the Retrieval of facts with the Generation of natural language.

The process happens in three distinct stages. If you fail at any stage, you are not cited.

Stage 1: Vectorization (The Hunter)

This is the biggest difference between Google and AI.

Google looks for Keywords (strings of text).

AI looks for Vectors (mathematical coordinates).

When a user searches for “best crm for small agency,” an embedding model converts that text into a Semantic Vector: a long list of numbers that represents the concept of the query. The system plots this point in a multi-dimensional “Vector Space.”

It doesn’t just look for pages that say “CRM.” It looks for pages that exist in the same mathematical neighborhood as “efficiency,” “low cost,” “client management,” and “small team workflows.”

- The SEO Mistake: Stuffing the exact keyword 10 times.

- The AEO Fix: Covering the entire semantic neighborhood of the topic.
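To make the “mathematical neighborhood” idea concrete, here is a minimal sketch of how closeness between two vectors is commonly measured with cosine similarity. The three-dimensional vectors and their axis meanings are hand-written assumptions for illustration; real systems use learned embeddings with hundreds or thousands of dimensions.

```python
import math

# Hypothetical 3-dimensional "embeddings", hand-written for illustration only.
# Assumed axes: [client management, low cost, enterprise scale]
TOY_VECTORS = {
    "best crm for small agency": [0.9, 0.8, 0.1],
    "affordable client tracking for small teams": [0.8, 0.9, 0.0],
    "enterprise resource planning suite": [0.3, 0.1, 0.9],
}

def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

query = TOY_VECTORS["best crm for small agency"]
near = cosine_similarity(query, TOY_VECTORS["affordable client tracking for small teams"])
far = cosine_similarity(query, TOY_VECTORS["enterprise resource planning suite"])

# The page that never says "CRM" still scores higher than the off-topic one.
print(f"near={near:.3f} far={far:.3f}")
```

Note that the closest page shares no exact keyword with the query. It wins because it sits in the same region of the toy vector space.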

Stage 2: The Retrieval (The Net)

Once the vector is plotted, the system performs a Similarity Search (often using algorithms like k-Nearest Neighbors). It scans its index to find content “chunks” that are mathematically closest to the query’s vector.

It grabs the top ~20-50 candidates. These are not just URLs; they are specific paragraphs or data tables extracted from your page.

Crucial Note: If your content is buried in unstructured HTML, the “chunking” process can split it in the wrong places, and the AI may be unable to retrieve the specific data point.
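The retrieval stage can be sketched in a few lines. This is a simplified illustration, not any engine's actual pipeline: the `embed` function below is a stand-in that uses word counts instead of a learned embedding model, chunks are split on blank lines, and the sample page text is invented.

```python
import math
import re
from collections import Counter

def embed(text):
    """Stand-in embedding: a bag-of-words count vector (an assumption, not a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def chunk(page_text):
    """Split a page into paragraph-level chunks on blank lines."""
    return [p.strip() for p in re.split(r"\n\s*\n", page_text) if p.strip()]

def top_k(query, chunks, k=2):
    """Brute-force k-nearest-neighbor search by cosine similarity."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]

# Invented sample page with three paragraphs.
page = """Our CRM costs $12 per user per month for small agency teams.

We were founded in 2019 and love coffee.

The CRM includes client management and small team workflows."""

results = top_k("crm for small agency", chunk(page), k=2)
for r in results:
    print(r)
```

The system returns paragraphs, not pages: the off-topic “about us” paragraph is dropped even though it lives on the same URL. Production systems typically replace the brute-force sort with an approximate nearest-neighbor index.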

Stage 3: The Re-Ranking (The Judge)

This is where you win or lose the citation.

The LLM now has its shortlist of roughly 20-50 candidates. It reads them and assigns a Confidence Score to each based on:

- Information Density: Does this chunk contain high-value nouns/numbers, or is it 80% fluff?

- Structural Clarity: Is the answer easy to parse (e.g., in a list or table)?

- Consensus: Does this source agree with the “Mean Truth” found in other trusted documents?

The chunks with the highest Confidence Scores are fed into the LLM’s context window to generate the final answer. The rest are discarded.
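One way to picture the re-ranking step is as a weighted score over the three signals above. The sketch below is purely illustrative: the `Candidate` fields, the 0-to-1 scales, and the weights are all hypothetical, and real re-rankers are usually learned models rather than hand-set formulas.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    text: str
    density: float    # 0..1, share of the chunk that carries facts (assumed precomputed)
    structure: float  # 0..1, how easy the chunk is to parse (assumed precomputed)
    consensus: float  # 0..1, agreement with other trusted sources (assumed precomputed)

# Hypothetical weights, chosen only to make the example concrete.
WEIGHTS = {"density": 0.4, "structure": 0.3, "consensus": 0.3}

def confidence(c):
    """Weighted sum of the three signals; higher means more likely to be cited."""
    return (WEIGHTS["density"] * c.density
            + WEIGHTS["structure"] * c.structure
            + WEIGHTS["consensus"] * c.consensus)

def rerank(candidates, keep=1):
    """Sort candidates by confidence and keep only the top few for the context window."""
    return sorted(candidates, key=confidence, reverse=True)[:keep]

candidates = [
    Candidate("Pricing table with three plans", density=0.9, structure=0.8, consensus=0.7),
    Candidate("Long intro about the changing world", density=0.2, structure=0.3, consensus=0.9),
]
winners = rerank(candidates, keep=1)
print(winners[0].text)
```

Even with a high consensus score, the fluffy introduction loses to the structured, fact-heavy chunk under these assumed weights.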

SEO vs AEO: The Ranking Differences

To win in the age of AI, you must stop optimizing for “Clicks” and start optimizing for “Citations.”

Traditional SEO and Answer Engine Optimization (AEO) are not just different strategies; they are different sports played with different rules.

| Signal | Google (Traditional SEO) | AI / LLMs (AEO) |
|---|---|---|
| Primary Metric | Domain Authority (Backlinks) | Semantic Proximity (Vector Match) |
| Content Goal | “Keep user on page” (Time on Site) | “Answer immediately” (Extraction) |
| Structure | Long-form, narrative flow | Lists, Tables, “Answer-First” |
| Freshness | Can rank old content if authoritative | Heavily penalizes “stale” data |
| Authority | Popularity (Who links to you?) | Accuracy (Do trusted sites agree?) |
| User Intent | Navigation & Exploration | Synthesis & Solution |

The “Zero-Rank” Phenomenon

The most critical realization for modern marketers is that Ranking #1 on Google is no longer a prerequisite for AI visibility.

In the Google ecosystem, a new website enters the “Sandbox.” It has no history, no links, and therefore, no trust. It is invisible.

In the AI ecosystem, the model is probabilistic, not hierarchical. If a brand new URL contains a specific data point that perfectly answers a user’s prompt—and that data is structured in a way the AI can easily parse—the model will cite it.

- Google’s Logic: “This site is new, so I don’t trust it yet.”

- AI’s Logic: “This site has the exact answer to the vector prompt, so I will use it.”

This creates a massive opportunity for challengers to “leapfrog” incumbents. You don’t need to be the biggest brand in the room to win the citation; you just need to be the most semantically precise.

The 3 New Ranking Factors for AI (Beyond Backlinks)

If LLMs ignore backlinks, what do they measure? Through our analysis of thousands of AI-generated answers, we have isolated three critical signals that determine “Citability.”

1. Information Density (The “Anti-Fluff” Signal)

Traditional SEO encouraged “Word Count.” We wrote 3,000-word guides because longer content generally ranked better.

AI models are the opposite. They are computationally expensive to run. Processing “fluff” wastes their tokens and money.

Definition: Information Density

The number of distinct facts, figures, and unique entities per 100 words of text.

If you write 500 words to explain a concept that could be explained in 50 words, the AI’s “Re-Ranking” layer assigns you a low score. It prefers content that is “Semantically Dense”—packed with nouns, numbers, and data points, not adjectives.

- Low Density (Ignored): “In the rapidly changing world of digital marketing, it is very important to consider…”

- High Density (Cited): “AEO traffic grew 40% in Q4 2025, according to FlipAEO Labs data.”
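You can approximate this metric on your own drafts with a crude heuristic. The sketch below counts tokens that contain a digit, plus capitalized tokens that are not the first word, as “facts.” That counting rule is an assumption made for illustration; it is not how any answer engine scores content, and it will miscount in many real sentences.

```python
def information_density(text):
    """Rough fact count per 100 words.

    Counts tokens containing a digit, plus capitalized tokens that are not
    the first word of the text, as a cheap proxy for figures and entities.
    """
    words = text.split()
    if not words:
        return 0.0
    facts = 0
    for i, word in enumerate(words):
        token = word.strip(".,;:!?\"'()")
        has_digit = any(ch.isdigit() for ch in token)
        is_name = i > 0 and token[:1].isupper()
        if has_digit or is_name:
            facts += 1
    return 100.0 * facts / len(words)

low = "In the rapidly changing world of digital marketing, it is very important to consider..."
high = "AEO traffic grew 40% in Q4 2025, according to FlipAEO Labs data."

print(information_density(low), information_density(high))
```

Run on the two example sentences above, the heuristic gives the fluffy sentence a score of zero and the data-backed sentence a score above 40 per 100 words.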

2. Structural Trust (Format for Machines)

LLMs are machines, not humans. They struggle to parse giant walls of text (unstructured data). They thrive on Structured Data.

To build “Structural Trust,” you must format your content so a bot can extract the answer without “reading” the narrative.

- Use Tables: For comparisons (Price, Features, Pros/Cons).

- Use Lists: For processes (Step 1, Step 2, Step 3).

- Use Definitions: Bold the core entity (e.g., “Generative Engine Optimization (GEO)”) immediately followed by its definition.

The Rule: If you can put it in a table, do not put it in a paragraph.
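If your comparison data already lives in a spreadsheet or database, turning it into a table is mechanical. Here is a minimal sketch that renders a list of records as a markdown table; the plan names and prices are invented placeholders.

```python
def to_markdown_table(rows):
    """Render a list of dicts (same keys in each) as a markdown pipe table."""
    headers = list(rows[0].keys())
    lines = [
        "| " + " | ".join(headers) + " |",
        "|" + "|".join("---" for _ in headers) + "|",
    ]
    for row in rows:
        lines.append("| " + " | ".join(str(row[h]) for h in headers) + " |")
    return "\n".join(lines)

# Invented example data.
plans = [
    {"Plan": "Starter", "Price": "$12/user", "Seats": 5},
    {"Plan": "Team", "Price": "$29/user", "Seats": 25},
]
table = to_markdown_table(plans)
print(table)
```

Each row becomes a self-contained unit that a chunker can lift out without needing the surrounding narrative.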

3. The “Freshness Floor”

In the old world, content was a Library Book. You could publish a great guide and let it sit for years.

In the AI world, content is a News Feed.

Because LLMs are constantly battling “hallucinations,” they are terrified of serving outdated facts. Our data shows a massive bias towards “Freshness.” If a model detects a “Content Gap” (e.g., your sitemap hasn’t changed in 6 months), it lowers the probability of citation.

- The Fix: You don’t need to write new posts every day. But you must update your existing pillars. Update the statistics, refresh the dates to reflect those real changes, and ensure the “Last Updated” schema (for example, the schema.org `dateModified` property) is current.
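As a sketch of what “current schema” can look like, the snippet below builds schema.org `Article` markup as JSON-LD with `datePublished` and `dateModified` fields. The headline and dates are placeholders, and whether a given answer engine reads this markup is an assumption rather than something documented here.

```python
import json
from datetime import date

def article_jsonld(headline, published, modified):
    """Build schema.org Article markup with publish and last-modified dates."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }

# Placeholder values for illustration.
markup = article_jsonld("How LLMs Rank Content", date(2025, 1, 15), date(2026, 1, 10))

# Wrap the JSON in the script tag that pages use to embed JSON-LD.
snippet = '<script type="application/ld+json">' + json.dumps(markup, indent=2) + "</script>"
print(snippet)
```

Regenerate the `dateModified` value only when the page content actually changes, so the markup matches what a reader sees.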

Platform Breakdown: How to Optimize for Each Engine

Not all Answer Engines are built the same. While they all use RAG, their “personality filters” (the instructions given to the model on how to answer) differ drastically.

To dominate the landscape, you need to understand the specific “Citability Signals” for each major platform.

1. ChatGPT (SearchGPT)

- The Persona: “The Helpful Assistant.”

- The Signal: Conversational Utility.

ChatGPT prioritizes content that solves a problem in a natural, human-like flow. It favors “How-to” guides, step-by-step instructions, and clear definitions. It is less likely to cite dense academic PDFs and more likely to cite a well-structured blog post that speaks directly to the user.

- Optimization Strategy: Write in the second person (“You”). Use clear headings that mimic natural questions (e.g., “How do I fix X?”).

2. Perplexity

- The Persona: “The Research Analyst.”

- The Signal: Citation Density & Data.

Perplexity is built to replace Google Search for knowledge workers. It is obsessed with Accuracy and Sources. It heavily favors content that contains hard data, statistics, and verifiable facts. It often cites the “original source” of a statistic rather than the blog that summarized it.

- Optimization Strategy: Be the “Primary Source.” Publish original data studies (like our FlipAEO Labs reports). Use plenty of numbers, percentages, and citations in your own text.

3. Google Gemini (AI Overviews)

- The Persona: “The Hybrid Engine.”

- The Signal: Google Ecosystem Trust (E-E-A-T).

Gemini is unique because it is tethered to Google’s massive Knowledge Graph. Unlike ChatGPT, which might trust a random but accurate Reddit thread, Gemini still leans heavily on Google’s core ranking signals (Experience, Expertise, Authoritativeness, Trustworthiness).

- Optimization Strategy: You generally still need traditional SEO fundamentals here. Strong schema markup, a healthy backlink profile, and “Author” bios are critical because Gemini validates your content against Google’s existing index.

The Hybrid Strategy

The mistake marketers make is trying to “game” one specific algorithm. The winning strategy is to build a Hybrid Moat that satisfies all three:

- Technical SEO: To satisfy Google and Gemini.

- Structured Data: To satisfy the RAG parsers of all engines.

- High-Utility Content: To satisfy the “Helpfulness” filters of ChatGPT.

The Verdict

The transition from Search Engines to Answer Engines is not a trend; it is an inevitability.

For twenty years, you wrote for humans and hoped Google would notice.

Now, you must write for Algorithms so that humans can find the answer.

We have established the rules: Information Density, Structural Trust, and Freshness, all resting on Vector Precision.

But knowing the rules is different from executing them. Writing content that satisfies these strict mathematical requirements—while still being readable for humans—is incredibly difficult and time-consuming.

FlipAEO: The First Content Engine for the AI Era

Most AI writers are built to “sound human.” They create fluff, adjectives, and empty narratives.

FlipAEO is different.

We are the first Generative Engine Optimization (GEO) Writing Platform designed specifically to engineer content for LLM retrieval. We don’t just “write blogs”; we construct Vector-Ready Assets.

- Automated Information Density: Our engine naturally strips fluff and maximizes the ratio of facts-to-words, giving you the high “Confidence Score” LLMs require.

- Native Structure: We automatically format your content with the specific tables, lists, and schema that RAG pipelines prioritize.

- Semantic Completeness: We don’t just target keywords; we target the entire vector neighborhood, ensuring you answer the intent behind the prompt.

You don’t need to be a data scientist to rank in ChatGPT. You just need a tool that writes like one.