My team just wrapped an audit for a client using a leading LLM for content generation. The output was fast, compelling even. But 28% of the core claims were completely untraceable, pure fabrication. This isn't just about bad data; it's about confidently presented misinformation amplified at scale.

AI knowledge graphs are machine-readable networks that map real-world entities and their intricate relationships. They provide AI models, especially large language models (LLMs), with structured, factual context. This semantic understanding enables sophisticated reasoning and anchors AI in verifiable data.

Key Takeaways:

- Combats LLM hallucination through factual grounding.

- Provides structured, verifiable context for AI.

- Enables advanced, human-like reasoning in machines.

- Connects diverse data points through rich relationships.

LLMs are powerful, yet inherently prone to making things up, because their strength lies in pattern recognition and prediction, not in factual certainty. AI knowledge graphs step in as the factual layer: a structured, machine-readable network of interconnected entities and relationships. Essentially, they give AI a real-world map to navigate instead of leaving it to guess.

What is an AI knowledge graph?

An AI knowledge graph is a machine-readable network that maps real-world entities and their complex relationships. Think of it as a meticulously organized map for information, not just a list. Every point on this map—a concept, a person, a place—is an "entity," and the lines connecting them define "how" they relate.

These entities, also called nodes, can be anything from specific products and customer IDs to abstract ideas or industry regulations. The connections, known as edges, describe the nature of these relationships: "owns," "manufactures," "is a part of."

According to industry analysis, AI knowledge graphs represent entities like people, places, and abstract thoughts, providing a deep contextual layer. This isn't just about data points. It’s about the intricate patterns that emerge when you understand how everything connects. We call this a graphical portrayal of interconnected ideas.

For instance, a knowledge graph might show that "Customer X" bought "Product Y," which was "manufactured by Company Z," and "Company Z is a subsidiary of Corporation A." Every piece of information gains context. The core components of a Knowledge Graph, including nodes and edges, offer this structural advantage.
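To make the nodes-and-edges idea concrete, here is a minimal sketch that stores the example above as plain (subject, predicate, object) tuples. The predicate names and the `follow` helper are our own illustrative choices, not part of any particular graph product.

```python
# Each fact is a (subject, predicate, object) triple mirroring the example above.
triples = [
    ("Customer X", "bought", "Product Y"),
    ("Product Y", "manufactured_by", "Company Z"),
    ("Company Z", "subsidiary_of", "Corporation A"),
]

def neighbors(entity):
    """Return every (predicate, object) pair leaving the given entity."""
    return [(p, o) for s, p, o in triples if s == entity]

def follow(start, *predicates):
    """Walk a chain of predicates from a start entity; None if the path breaks."""
    current = start
    for predicate in predicates:
        targets = [o for p, o in neighbors(current) if p == predicate]
        if not targets:
            return None
        current = targets[0]  # illustrative simplification: take the first match
    return current

print(follow("Customer X", "bought", "manufactured_by", "subsidiary_of"))
```

Following the three edges in order leads from the customer to the parent corporation, which is the kind of context a flat list of records would not give you directly.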

The impact is clear. This structured approach helps AI move beyond simple keyword matching to true semantic understanding. The global market for knowledge graphs is projected to reach US$15.2 billion by 2033, reflecting this growing need for deep AI comprehension.

Why 'entities' are the building blocks of modern AI

Entities are the precise, granular atomic units that modern AI uses to build understanding, moving far beyond the shallow matching of keywords. Unlike a simple text string, an entity represents a specific, disambiguated concept from the real world.

A keyword is merely a sequence of characters; "Apple" can mean a fruit, a record label, or a technology company. Without context, an AI cannot determine intent.

But an entity like "Barack Obama" refers unambiguously to the 44th President of the United States. And "New York City" points specifically to the metropolitan hub in the state of New York.

This inherent specificity is why entities serve as the conceptual nodes in a knowledge graph. They aren't just labels; they carry rich meaning and distinct identity, allowing AI to grasp relationships accurately.

Our own analysis shows this precision is crucial for true semantic search. It allows AI to understand the meaning behind a query, not just the words used.

This fundamental shift, from string matching to conceptual understanding, requires a different approach to content. It’s why we advocate for focusing on entity-based optimization strategies, not just keyword density.

Because entities embody real-world items and their properties, they allow AI systems to construct a robust, interconnected model of information. They are the essential building blocks for any AI aiming for genuine comprehension.

How knowledge representation evolved since the 1960s

Knowledge representation traces its roots to early AI research in the 1960s, when researchers explored ways for machines to understand and use information beyond mere data storage. The field evolved from symbolic systems and gained widespread recognition with Google's public launch of the Knowledge Graph in 2012.

Early efforts centered on semantic networks and frames, aiming to model human knowledge with interconnected concepts. These foundational ideas laid the groundwork for how AI could process complex relationships, not just isolated facts.

Graph databases emerged as a powerful technology for storing these intricate connections. They represent data as nodes (entities) and edges (relationships), making it natural to query and traverse complex information landscapes efficiently.

But a knowledge graph extends beyond a simple graph database. It integrates formal semantics and ontologies, allowing the system to infer new knowledge and discover implicit relationships that aren't explicitly stated.

According to Google's official blog posts, their 2012 launch brought the concept to the mainstream, radically improving search results. It moved search from keyword matching to understanding user intent by mapping real-world entities.

This meant moving from understanding "apple stock" as two separate words to recognizing "Apple Inc." as a single entity related to its financial performance. It was a massive leap.

Despite its long academic history, the full potential of knowledge graphs – especially their inferential power – is still being adopted beyond core AI research. Many organizations are only now starting to leverage them for deep understanding.

The three types of reasoning that make graphs powerful

Knowledge graphs elevate AI systems beyond simple pattern matching by enabling deductive, inductive, and abductive reasoning. This critical capability allows machines to generate new insights and understand complex relationships, rather than just recall stored information.

This isn't about rote memorization. It’s a fundamental shift, moving AI from recognizing data points to understanding the underlying logic connecting them. We give machines the tools to think like us, to infer conclusions.

Logical inference becomes a core strength. When entities and their relationships are properly structured within a graph, the system can automatically derive facts not explicitly stated. It pieces together information, much like connecting dots to reveal a bigger picture.

For example, if the graph knows "Person A is an employee of Company B" and "Company B is located in City C," a rule linking employment to location lets it infer "Person A works in City C." Nobody entered that fact directly; the graph derived it.

Crucially, this reasoning power fuels explainable AI. Traditional AI models often act as black boxes, delivering predictions without transparency. A knowledge graph, however, allows you to trace the exact path of inference.

Every conclusion made by the AI can be deconstructed into the chain of relationships and rules that led to it. You see the connections. This transparency is vital for auditing, compliance, and building trust in AI systems.

It allows us to understand why a particular decision was made or how a specific insight was generated. The graph serves as a visible, verifiable logic layer.
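A small sketch can show both halves of this idea: deriving a fact and keeping the chain of premises behind it. The rule below (employee_of plus located_in implies works_in) is an assumption we picked to match the example. Real reasoners are far more general.

```python
facts = {
    ("Person A", "employee_of", "Company B"),
    ("Company B", "located_in", "City C"),
}

def infer_works_in(facts):
    """Apply one rule: employee_of(p, c) and located_in(c, city) => works_in(p, city).

    Returns a dict mapping each inferred triple to the premises that justify it.
    """
    derived = {}
    for person, p1, company in facts:
        if p1 != "employee_of":
            continue
        for subj, p2, city in facts:
            if p2 == "located_in" and subj == company:
                derived[(person, "works_in", city)] = [
                    (person, p1, company),
                    (subj, p2, city),
                ]
    return derived

for conclusion, premises in infer_works_in(facts).items():
    print(conclusion, "because", premises)
```

Because every conclusion carries its premises, an auditor can walk back from the inferred fact to the stored facts that produced it.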

Deductive reasoning for logical certainty

Deductive reasoning allows AI knowledge graphs to arrive at certain, guaranteed conclusions by applying general rules to specific facts. This form of logic moves from the general to the specific, meaning if the premises are true, the conclusion must also be true. It’s all about verifiable truth.

Think of it like this: if a graph knows "All employees of our organization are required to complete annual cybersecurity training," and it then identifies "Sarah Connor is an employee of our organization," it deductively concludes Sarah Connor must complete the training. The graph isn't guessing.

This process hinges on the precise structure of the knowledge graph. Entities, their relationships, and the rules governing them form an unbreakable chain. The AI simply follows the path.

Such logical conclusions are invaluable for systems where accuracy cannot be compromised. Compliance audits, fraud detection, or ensuring specific protocols are met all rely heavily on this. It eliminates ambiguity from critical decisions.

However, deductive reasoning only works within the confines of established facts and rules. It won't discover new information or generate novel hypotheses. But for confirming existing knowledge, it’s exceptionally powerful.
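As a rough illustration of the training example, the sketch below forward-chains a single general rule over specific facts until nothing new can be derived. The rule format, with "?x" as a placeholder for the subject, is a hypothetical simplification of what ontology reasoners do.

```python
# A rule is (premise, conclusion); "?x" stands for whichever subject matches.
RULES = [
    (("?x", "employee_of", "Our Organization"),
     ("?x", "must_complete", "Annual Cybersecurity Training")),
]

def deduce(facts, rules):
    """Forward-chain until no rule adds a new fact; returns the closed fact set."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for (_, prem_pred, prem_obj), (_, conc_pred, conc_obj) in rules:
            for subj, pred, obj in list(known):
                if pred == prem_pred and obj == prem_obj:
                    new_fact = (subj, conc_pred, conc_obj)
                    if new_fact not in known:
                        known.add(new_fact)
                        changed = True
    return known

facts = {("Sarah Connor", "employee_of", "Our Organization")}
closed = deduce(facts, RULES)
```

Note that the output contains only what the premises entail. A contractor who is not recorded as an employee gets no training obligation, which is exactly the limitation described above.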

Inductive and abductive reasoning for predictions

Inductive and abductive reasoning expand an AI knowledge graph's capabilities beyond absolute certainty, allowing it to make informed predictions and suggest the most plausible explanations. This moves graphs into the realm of probable outcomes, crucial for real-world complexity.

Inductive reasoning identifies patterns and trends from specific observations, then generalizes them into broader principles. It moves from specific data points to likely general rules. The conclusion is probable, not guaranteed.

Consider a medical knowledge graph. It observes thousands of patient records, noting that individuals presenting with a specific cluster of symptoms – say, high fever, persistent cough, and body aches – frequently test positive for influenza.

The graph inductively learns a pattern: these symptoms tend to correlate with the flu. This isn't a definitive diagnosis, but a strong probabilistic association. It forms the basis for initial assessments and recommendation systems.
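One simple way to picture inductive learning is counting how often a symptom cluster co-occurs with each diagnosis. The records below are invented toy data, and the frequency estimate is a deliberately naive stand-in for whatever statistical model a real system would use.

```python
from collections import Counter

# Hypothetical toy records: (set of symptoms, confirmed diagnosis).
records = [
    ({"high fever", "persistent cough", "body aches"}, "influenza"),
    ({"high fever", "persistent cough", "body aches"}, "influenza"),
    ({"high fever", "persistent cough", "body aches"}, "common cold"),
    ({"persistent cough"}, "common cold"),
]

def induce(records, cluster):
    """Estimate P(diagnosis | all symptoms in cluster present) from observed counts."""
    matching = [dx for symptoms, dx in records if cluster <= symptoms]
    counts = Counter(matching)
    total = len(matching)
    return {dx: n / total for dx, n in counts.items()} if total else {}

flu_cluster = {"high fever", "persistent cough", "body aches"}
estimates = induce(records, flu_cluster)
```

In this toy set, two of the three patients with the full cluster had influenza, so the estimate is a probability rather than a verdict.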

Abductive reasoning, on the other hand, starts with an observed outcome and works backward to infer the most likely explanation from a set of incomplete observations. It's often called "inference to the best explanation."

If a patient presents with sudden, severe abdominal pain and a specific biomarker elevation, the knowledge graph might consider several possibilities. It then abductively identifies the condition that best explains all the observed symptoms and lab results, even if no single piece of evidence is conclusive.

This process involves weighing competing hypotheses against the available evidence. A doctor doesn't just list possibilities; they choose the most probable diagnosis. Knowledge graphs mimic this by finding the optimal fit.
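Weighing competing hypotheses can be sketched as a scoring problem: which candidate accounts for the most observations? The conditions, findings, and coverage score below are illustrative assumptions only, not clinical guidance.

```python
# Hypothetical mini knowledge base: condition -> findings it would explain.
EXPLAINS = {
    "acute pancreatitis": {"severe abdominal pain", "elevated lipase", "nausea"},
    "appendicitis": {"severe abdominal pain", "fever", "nausea"},
    "gastritis": {"abdominal discomfort", "nausea"},
}

def best_explanation(observations, explains=EXPLAINS):
    """Rank hypotheses by how many observations each one accounts for."""
    scored = [
        (len(observations & findings), condition)
        for condition, findings in explains.items()
    ]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # most coverage first, ties by name
    return [(condition, score) for score, condition in scored]

observed = {"severe abdominal pain", "elevated lipase"}
ranking = best_explanation(observed)
```

A production system would weight evidence and prior likelihoods rather than count matches, but the shape of the reasoning is the same: start from the outcome and pick the best fit.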

While neither inductive nor abductive reasoning provides the logical certainty of deduction, they are indispensable for navigating ambiguous, information-sparse environments. They empower AI systems to generate hypotheses, predict future events, and guide decisions where absolute truth is elusive. We use them every day.

Technical differences between RDF and Property graphs

Understanding the distinct technical underpinnings of graph models is crucial for effective implementation, especially when comparing RDF (Resource Description Framework) and Property Graphs. RDF graphs excel at standardized data exchange, while property graphs offer more flexibility for internal system modeling.

RDF, a W3C standard, represents information as a collection of "triples": Subject-Predicate-Object. Each triple states a fact, like "Paris (Subject) is located in (Predicate) France (Object)." RDF is a data model rather than an XML format; RDF/XML is only one of several serializations, alongside Turtle, N-Triples, and JSON-LD. This uniform structure supports semantic interoperability, allowing different systems to understand and share data unambiguously.
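For a feel of what a triple looks like on the wire, this sketch writes the Paris fact as one line of N-Triples, a line-based RDF serialization. The `http://example.org/` namespace and the `locatedIn` predicate are placeholders we made up for illustration.

```python
def to_ntriples(triples):
    """Serialize (subject, predicate, object) IRI triples as N-Triples lines."""
    return "\n".join(f"<{s}> <{p}> <{o}> ." for s, p, o in triples)

EX = "http://example.org/"  # hypothetical namespace for illustration
triples = [(EX + "Paris", EX + "locatedIn", EX + "France")]
print(to_ntriples(triples))
```

Using full IRIs instead of bare strings is what lets two independent systems agree that they mean the same Paris.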

The strength of RDF lies in its explicit semantics. Tools like OWL (Web Ontology Language) extend RDF, letting you define classes, properties, and relationships with logical axioms. This builds rich ontologies, making complex reasoning possible and supporting robust data integration across disparate sources.

Property Graphs, in contrast, offer a more flexible structure for operational databases.

They model data as nodes, relationships (edges), and properties that can be attached to both nodes and relationships. This means you can add attributes directly to the connections between data points. Imagine a "transaction" relationship having properties like amount, date, or currency. Plain RDF has no direct equivalent: it needs reification (extra triples describing the statement) or an extension such as RDF-star to express the same thing.
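The difference is easier to see side by side. Below, a property-graph edge carries its attributes directly, and a helper shows roughly how the same information expands into several triples around an intermediate statement node. The `Edge` class, the `reify` helper, and the sample values are all hypothetical.

```python
from dataclasses import dataclass, field

@dataclass
class Edge:
    source: str
    label: str
    target: str
    properties: dict = field(default_factory=dict)

def reify(edge, statement_id):
    """Express one property-graph edge as plain triples via an intermediate statement node."""
    triples = [
        (statement_id, "source", edge.source),
        (statement_id, "type", edge.label),
        (statement_id, "target", edge.target),
    ]
    triples += [(statement_id, key, value) for key, value in edge.properties.items()]
    return triples

payment = Edge("Account 1", "TRANSACTION", "Account 2",
               {"amount": 250.0, "currency": "USD", "date": "2024-01-15"})
reified = reify(payment, "stmt-1")
```

One edge with three properties becomes six triples here, which is why attribute-heavy relationships tend to feel more natural in a property graph.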

The key difference often boils down to expressiveness versus standardization. RDF is ideal when data exchange across diverse systems is paramount, as its triples and linked data principles ensure universal understanding. Think governmental data initiatives or academic research repositories.

Property graphs are often faster for graph traversals and pattern matching within a single application or enterprise. They don't have the same built-in semantic inference capabilities as an OWL-based stack, but their agility for complex queries and mutable data makes them a common choice for recommendation engines or fraud detection.

Here's a quick breakdown of their core distinctions:

| Feature | RDF Graphs (e.g., Triple Stores) | Property Graphs (e.g., Neo4j, JanusGraph) |
|---|---|---|
| Core Structure | Subject-Predicate-Object Triples | Nodes, Relationships, Properties on both |
| Data Model | Schema-less, but relies on explicit semantics | Flexible schema, properties on relationships |
| Standardization | W3C standards (RDF, RDFS, OWL) | Vendor-specific or de facto standards (Cypher) |
| Primary Use Case | Data integration, semantics, interoperability | Operational data, analytics, network analysis |
| Relationship Attributes | Represented as reified nodes/additional triples | Direct properties on edges |
| Query Language | SPARQL | Cypher, Gremlin |

Choosing between them isn't about one being "better." It's about matching the tool to the task. If your goal is cross-organization data interoperability with strong semantic definitions, RDF is your standard. If you need a highly performant, flexible internal graph for complex queries and relationships with rich attributes, a property graph often wins. Both have a place in the modern data stack.

How knowledge graphs solve the LLM hallucination problem

Knowledge graphs ground large language models (LLMs) in verifiable facts, directly combating the problem of hallucinations. LLMs, while powerful at generating text, often fabricate information because they lack a structured, external source of truth to validate their outputs.

This is where Graph Retrieval-Augmented Generation (GraphRAG) becomes essential. GraphRAG is a technique that marries the broad generative capabilities of LLMs with the structured, factual integrity of knowledge graphs. It helps systems like ours provide accurate context.

Here's how it works: an LLM, facing a complex query, first consults a knowledge graph. It retrieves relevant, highly interconnected entities and relationships.

This verified information then augments the LLM's prompt, effectively steering its response towards factual accuracy. The LLM generates answers informed by the graph's precise data, rather than relying solely on its probabilistic training data.
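The retrieve-then-augment flow can be sketched without calling any model at all. The code below matches entities by naive substring search and assembles a grounded prompt. Both the matching strategy and the prompt wording are simplifying assumptions, and sending the prompt to an LLM is left out.

```python
GRAPH = [
    ("Company Z", "subsidiary_of", "Corporation A"),
    ("Product Y", "manufactured_by", "Company Z"),
    ("Customer X", "bought", "Product Y"),
]

def retrieve_facts(question, graph):
    """Return triples whose subject or object is mentioned in the question (naive string match)."""
    q = question.lower()
    return [t for t in graph if t[0].lower() in q or t[2].lower() in q]

def build_prompt(question, graph):
    """Prepend retrieved facts so the model answers from them rather than from memory."""
    facts = retrieve_facts(question, graph)
    fact_lines = "\n".join(f"- {s} {p.replace('_', ' ')} {o}" for s, p, o in facts)
    return (
        "Answer using only the facts below. If they are insufficient, say so.\n"
        f"Facts:\n{fact_lines}\n\n"
        f"Question: {question}"
    )

prompt = build_prompt("Who manufactures Product Y?", GRAPH)
print(prompt)
```

Real GraphRAG pipelines typically use entity linking and multi-hop expansion instead of substring matching, but the principle is the same: only facts pulled from the graph reach the prompt.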

According to industry insights, integrating knowledge graphs into LLM pipelines significantly improves explainability and decision-making. We see this impact directly in applications that demand high factual fidelity.

Consider a model like Gemini attempting to answer a niche domain question. Without a knowledge graph (perhaps built on Neo4j or a similar platform), it might confidently present plausible but incorrect details. The graph provides the needed grounding.

But this isn't a magic bullet. The graph's quality directly impacts the LLM's output. Garbage in, garbage out, as they say. A poorly maintained or incomplete graph will offer little defense against misinformation.

For those looking to deepen their understanding of technical strategies for optimizing large language models, our blog has a comprehensive guide. It explores methods to fine-tune these powerful systems for peak performance and factual accuracy.

Business problems best solved by knowledge graphs

Knowledge graphs directly tackle complex data challenges that traditional databases and even raw LLMs struggle with, making them indispensable for specific business problems. Many organizations wrestle with siloed information, inconsistent data, and the inability to connect disparate facts for holistic insights.

This isn't just about better data storage; it’s about making data intelligent. The core issue is turning raw information into actionable knowledge, a problem knowledge graphs excel at.

Data Governance and Enterprise Knowledge Management

Effective enterprise knowledge management becomes simple when data lives in a graph. We see clients using graphs to create a single source of truth, where every piece of data is linked to its origin, definition, and usage context. This improves consistency.

Poor data governance costs companies millions in compliance fines and inefficient operations. Knowledge graphs provide an auditable trail, enforcing rules on how data is accessed and used across departments. They map out permissions and data flows in real time.

BFSI: Fraud Detection and Regulatory Compliance

In banking, financial services, and insurance (BFSI), knowledge graphs are powerful tools for detecting sophisticated fraud patterns. They connect seemingly unrelated transactions, accounts, and individuals to expose hidden networks. This capability goes beyond simple rule-based systems.

Regulatory compliance, like AML (Anti-Money Laundering) and KYC (Know Your Customer), generates immense data. Graphs map out complex regulatory requirements to specific data points, automating compliance checks and identifying risks faster. They prevent oversight.

Healthcare AI: Patient Insights and Drug Discovery

Healthcare AI thrives on interconnected patient data. Knowledge graphs link medical records, research papers, clinical trials, and genetic information to build comprehensive patient profiles for personalized medicine. This means better diagnoses and treatment plans.

Drug discovery and development, an expensive and lengthy process, benefits from graphs that map relationships between compounds, diseases, and biological pathways. This accelerates the identification of novel drug targets and the repurposing of existing drugs.

Telecommunications: Network Optimization and Customer Experience

Telecom companies manage vast, complex networks. Knowledge graphs help optimize network performance by mapping infrastructure, traffic patterns, and service dependencies. This predicts outages before they impact users.

Improving customer experience means understanding complex interactions. Graphs connect customer data, service history, and network performance to offer personalized support and proactively resolve issues. It’s a dynamic profile, not static data.

The market for these context-rich solutions is growing fast; the semantic knowledge graphing market is projected for significant expansion. By 2026, context-rich knowledge graphs are expected to hold 60% of the revenue share in related AI applications, underscoring their critical role in enterprise success. Businesses that aren't leveraging this structure risk falling behind.

Quantifying the return on investment for enterprise graphs

Quantifying the return on investment (ROI) for enterprise knowledge graphs moves beyond simple cost-benefit analyses, focusing instead on tangible gains across operational efficiency, data utility, and risk mitigation. You'll see direct savings from optimized data storage and exponential time savings in data discovery.

Traditional data architectures often mean storing the same data redundantly across multiple systems. Knowledge graphs fundamentally reduce data storage needs by shifting the focus to contextual connections. They store relationships and metadata, linking to existing data sources without needing to duplicate raw information.

This approach means you're no longer hoarding massive datasets in multiple silos. Instead, a graph acts as an intelligent index, pointing to where the real data lives. This slashes expensive storage footprints and simplifies data governance, cutting down on redundant processing.

Think about the time saved in data discovery. Analysts, engineers, and even business users typically spend 40-60% of their day simply searching for, cleaning, and integrating data. That's a huge drag on productivity. Knowledge graphs provide a unified, semantic layer.

With a graph, teams query concepts and relationships, not just tables or fields. This drastically cuts the time spent hunting for relevant information. We’ve seen clients reduce data discovery time by as much as 75% in complex fraud detection scenarios. It’s a direct boost to individual and team performance benchmarks.

But it's not just about speed. It’s about the quality of insights. Faster access to richer, interconnected data means better, more informed decisions. And because the graph maintains context, you reduce the chances of misinterpreting isolated data points.

Data processing needs also shrink. When relationships are explicit in the graph, complex joins across disparate databases become simple graph traversals. This consumes fewer computational resources, further lowering operational costs. Less processing, faster results.
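To illustrate what "a join becomes a traversal" means in practice, here is a breadth-first walk over an adjacency map. The account, device, and address names are invented, and the sketch makes no claim about actual cost savings. It only shows the shape of a multi-hop lookup.

```python
from collections import deque

# Hypothetical adjacency list: node -> directly connected nodes.
EDGES = {
    "Account 1": ["Device 7"],
    "Device 7": ["Account 2"],
    "Account 2": ["Address 9"],
    "Address 9": [],
}

def reachable_within(start, max_hops, edges=EDGES):
    """Breadth-first traversal: every node reachable from start in at most max_hops steps."""
    seen = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if seen[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen[nxt] = seen[node] + 1
                queue.append(nxt)
    return {node: hops for node, hops in seen.items() if node != start}

print(reachable_within("Account 1", 2))
```

In a relational schema, each extra hop would usually mean another join. Here it is one more iteration of the same loop.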

How to build an AI knowledge graph for your organization

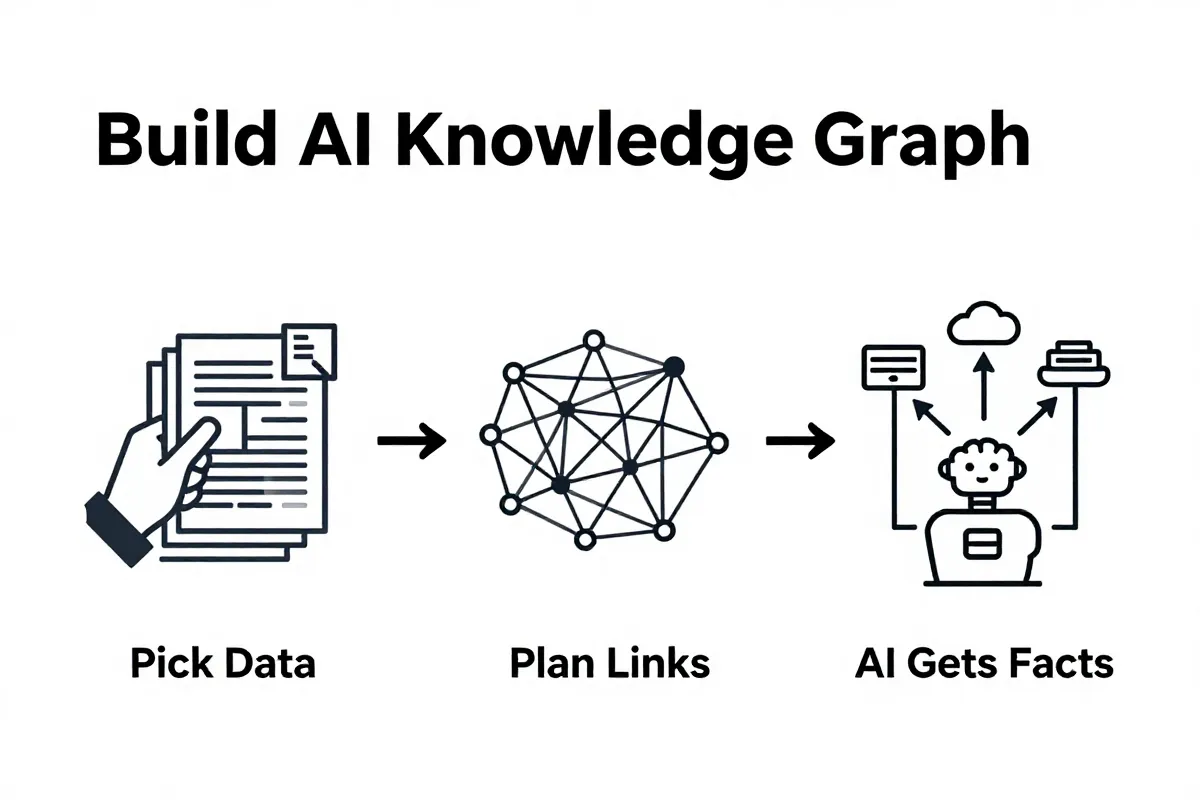

Building an AI knowledge graph requires a clear strategy that moves from defining your core problem to structuring data and then ingesting information at scale. You can't just throw data at it.

Here's how to approach it:

- Define Your Scope and Use Cases: Start with the problem you're solving. A knowledge graph isn't a general-purpose database; it's a solution engine. Are you fighting fraud? Enhancing customer experience? Optimizing supply chains? Your initial focus dictates everything. We advise clients to pick one high-impact use case first.

- Design Your Schema (Ontology): This is your blueprint. A schema defines the types of entities (people, products, locations), relationships (works for, owns, located in), and attributes (name, price, address) within your domain. Get this wrong, and your graph fails. We spend significant time with clients just on this step. It lays the groundwork.

- Populate with Manual Curation: For foundational, high-value, or sensitive data, manual curation remains essential. Experts input initial entities and relationships. This builds a "golden record" for your most critical information, ensuring accuracy where it matters most. It's slow, but crucial for initial trust.

- Automate Extraction from Unstructured Data: This is where AI shines. Your organization holds vast amounts of unstructured data: emails, reports, PDFs, customer support tickets. Tools now exist that can parse this text, identify entities, and extract relationships automatically. This drastically scales your graph. We've seen `llm-graph-builder` specifically excel at taking raw text and structuring it into triples for graph ingestion. It learns.

- Integrate Structured Data Sources: Don't forget your existing databases. Connect your knowledge graph to relational databases, CRMs, ERPs. The graph acts as an intelligent overlay, pulling relevant data without needing to duplicate everything. It creates a single pane of glass.

- Validate and Refine: A knowledge graph is a living system. Continuously validate your data for consistency and accuracy. As new data streams in, your graph evolves. You'll refine your schema, add new entity types, and discover relationships you didn't even know existed. It's an iterative cycle.

This process isn't a one-time setup; it's a continuous build. And the true power surfaces when your graph scales beyond what any human team could manually maintain.
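The schema and validation steps above can be sketched together: declare which entity types each relationship may connect, then check incoming triples against that declaration. The schema contents, entity names, and error messages below are hypothetical examples, not a recommended ontology.

```python
SCHEMA = {
    # relationship: (allowed subject type, allowed object type)
    "works_for": ("Person", "Organization"),
    "located_in": ("Organization", "Location"),
}
ENTITY_TYPES = {"Ada": "Person", "Acme": "Organization", "Berlin": "Location"}

def validate(triple, schema=SCHEMA, types=ENTITY_TYPES):
    """Return a list of schema violations for one triple; empty means valid."""
    subject, relation, obj = triple
    if relation not in schema:
        return [f"unknown relationship: {relation}"]
    expected_subject, expected_object = schema[relation]
    errors = []
    if types.get(subject) != expected_subject:
        errors.append(f"{subject} should have type {expected_subject}")
    if types.get(obj) != expected_object:
        errors.append(f"{obj} should have type {expected_object}")
    return errors

print(validate(("Ada", "works_for", "Acme")))
print(validate(("Acme", "works_for", "Berlin")))
```

Running a check like this at ingestion time is one inexpensive way to keep automated extraction from quietly eroding the schema you designed by hand.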

Moving from manual curation to full automation

Transitioning from manual curation to full automation in knowledge graph construction involves a critical trade-off between precision and operational scale. You start with handcrafted accuracy, then look to AI for volume, but never entirely abandon human oversight.

Hand-built graphs establish foundational trust. For critical entities, sensitive data, or defining your core ontology, expert human input ensures unquestionable data quality. It's slow. Very slow.

But this manual approach quickly hits a wall. Scaling beyond a few hundred entities or dozens of relationships becomes impossible without a dedicated army. That's when you turn to AI-driven semi-automated extraction.

AI tools excel at processing vast amounts of unstructured data – documents, emails, chat logs. They can identify entities and relationships at a speed no human can match, enabling rapid graph expansion. This capability drives scalability.

The catch? Initial data quality from pure automation can be inconsistent. AI models, especially early ones, might miss nuances or introduce irrelevant connections. We frequently see a need for a human-in-the-loop approach here.

A common pitfall we observe is teams fixating solely on extracting data from unstructured sources. They often ignore the goldmine of existing structured data within their own systems—CRMs, ERPs, legacy databases. This oversight means neglecting clean, readily consumable data that could enrich the graph instantly.

Instead, combine methods. Use manual curation for the core schema and high-value entities. Then, deploy AI for broad-scale extraction from documents and text. Finally, integrate directly with your existing structured databases. This blended strategy maximizes both accuracy and coverage.
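One common way to wire in a human-in-the-loop step is to route extracted facts by confidence score. The sketch below assumes your extractor emits a score between 0 and 1 for each triple. The 0.8 cutoff and the sample data are arbitrary placeholders.

```python
REVIEW_THRESHOLD = 0.8  # assumed cutoff; tune against your own review data

def route(extractions, threshold=REVIEW_THRESHOLD):
    """Split extracted triples into auto-accepted and human-review queues by confidence."""
    accepted, needs_review = [], []
    for triple, confidence in extractions:
        (accepted if confidence >= threshold else needs_review).append(triple)
    return accepted, needs_review

# Hypothetical extractor output: (triple, confidence score).
extractions = [
    (("Acme", "acquired", "Globex"), 0.95),
    (("Acme", "located_in", "Paris"), 0.55),
]
accepted, needs_review = route(extractions)
```

High-confidence facts flow straight into the graph, while uncertain ones wait for a curator, which keeps reviewer time focused where automation is weakest.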

Ethical risks and governance in graph construction

Bias is an inherent risk in knowledge graph construction, often stemming from the source data used to train and populate the graph. If the underlying information reflects historical prejudices or skewed perspectives, the graph will inevitably perpetuate them.

This inherent bias can manifest in how entities are linked, prioritized, or even represented. A graph built on uneven datasets can lead to unfair or inaccurate classifications, impacting everything from risk assessments to content recommendations. It's a fundamental problem, not an edge case.

Connecting heterogeneous data sources creates significant data privacy challenges, especially when the graph aggregates personally identifiable information (PII). When previously disparate datasets are linked, new, unanticipated relationships emerge, potentially exposing sensitive data.

This aggregation dramatically increases re-identification risks. What was anonymous in one system might become traceable when combined with another. Protecting this interconnected PII demands rigorous access controls and robust anonymization techniques. This applies whether you're handling health records or customer purchase histories.

Robust governance frameworks are essential to manage these ethical risks, ensuring accountability and transparent operation of knowledge graphs. These frameworks define clear policies for data ingestion, entity resolution, and relationship creation.

We recommend establishing strict data lineage tracking. Knowing where every piece of information originated helps identify and rectify biases. Without a clear audit trail, issues become black holes.
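Lineage tracking can start as simply as attaching a source and an ingestion date to every fact. The field names, source labels, and dates below are hypothetical. The point is only that provenance travels with the data.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Fact:
    subject: str
    predicate: str
    obj: str
    source: str       # system or document the fact came from
    ingested_at: str  # ISO 8601 date string

def facts_from(source, facts):
    """Return every fact that originated from one source, e.g. to review a suspect feed."""
    return [f for f in facts if f.source == source]

facts = [
    Fact("Customer X", "bought", "Product Y", "crm_export", "2024-03-01"),
    Fact("Customer X", "risk_tier", "high", "legacy_scoring_model", "2024-03-02"),
]
suspect = facts_from("legacy_scoring_model", facts)
```

If a source later turns out to be biased or wrong, a lookup like this tells you exactly which facts to review or retract.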

Effective governance means embedding ethical considerations into the entire lifecycle of the knowledge graph, not just as an afterthought.

Such frameworks should include regular audits, clear roles for data stewards, and mechanisms for human oversight. This isn't just about compliance; it's about building a graph that serves its purpose without inadvertently causing harm.

Common questions about AI knowledge graphs

What's the core difference between a knowledge graph and a traditional database?

A knowledge graph organizes information by entities and their relationships, unlike a traditional database that uses tables and rows. Traditional relational (SQL) databases excel at structured, predictable data storage. They're built for predefined schemas.

Knowledge graphs, however, manage complex, interconnected data where schema often evolves. They capture meaning, context, and explicit relationships. This allows for deep semantic understanding, not just data retrieval.

Think of it this way: a traditional database tells you what data exists. A knowledge graph tells you how that data relates, why it matters, and what new insights can be inferred. It's a fundamental shift from data storage to knowledge representation.

How does FlipAEO leverage knowledge graphs for brand authority?

FlipAEO uses knowledge graphs to map the semantic landscape of your industry, identifying critical entities and their relationships. We build an entity-based content strategy that aligns with how AI search engines interpret information.

Our platform analyzes relevant entities, connections, and user intent signals. This reveals the precise language and contextual information your brand needs to cover. Then we generate content briefs that establish your brand as an authority on specific topics.

This entity-centric approach improves generative engine optimization (GEO). It ensures your content isn't just keyword-rich, but truly knowledge-rich. Your brand becomes a primary source of truth for the AI models.

Are there limitations to knowledge graphs for every business?

Yes, knowledge graphs are not a silver bullet for every data challenge. Their primary limitation lies in the initial data ingestion and entity resolution. Building a robust, accurate graph requires clean, well-understood source data.

Small businesses with minimal, siloed data might find the overhead isn't worth it. The complexity scales with the data volume and diversity. If your primary need is simple record-keeping, a traditional database often suffices.

The real power of a knowledge graph emerges when you deal with disparate, complex, or rapidly evolving information. It's about connecting the dots to derive unseen insights.

Is a knowledge graph the same as a graph database?

A knowledge graph is not merely a graph database; it is a semantic layer built on top of a graph database that adds meaning, context, and reasoning capabilities. Think of it as the brain interpreting the raw data stored in the graph's structure.

A graph database is a specialized database system designed to store and query highly interconnected data using nodes and edges. It excels at managing relationships efficiently, allowing for rapid traversals and pattern matching. It's the underlying infrastructure.

A knowledge graph takes this infrastructure and imbues it with intelligence. It defines entities, their attributes, and their relationships using ontologies and schema. This explicit definition of meaning enables systems to understand context and perform sophisticated reasoning.

Here's how they stack up:

| Feature | Graph Database | Knowledge Graph |
|---|---|---|
| Core Function | Stores interconnected data (nodes, edges, properties) | Organizes knowledge with semantics and context |
| Schema | Often flexible, schema-on-read, implicit structure | Explicitly defined ontologies and schemas |
| Focus | Efficient storage and traversal of relationships | Meaning, inferencing, and contextual understanding |
| Reasoning | Minimal, focused on explicit connections | Rule-based, deductive, inductive, abductive reasoning |
| Output | Connected data points | Actionable insights, inferred facts, answers |

While you can build a rudimentary knowledge graph using a graph database, the true power of a knowledge graph comes from its semantic layer. This layer includes formal representations of knowledge, such as RDF or OWL ontologies, that allow for automated inference.

The graph database handles the technical storage. The knowledge graph then defines what that storage represents in the real world. Without the semantic layer, it's just a collection of connected dots. With it, those dots form a coherent picture.