AI invisibility is the critical failure of web content to be detected and processed by large language models (LLMs) and generative AI systems. This prevents your brand from being cited in AI search results, effectively rendering you unseen by a growing segment of digital users. It's a new, silent ranking killer.

Key Characteristics of AI Invisibility:

- Affects traditionally well-ranking websites.

- Driven by LLM processing nuances and technical barriers.

- Leads directly to a loss of agentic commerce opportunities.

People often ask if AI search visibility truly matters when they already rank on Google's first page. The harsh reality is ranking #1 on Google no longer guarantees visibility to the 62% of users now turning to ChatGPT or Gemini for answers. GPTBot, ClaudeBot, and PerplexityBot traffic is surging, yet most websites remain entirely unoptimized for these new agents. Ignoring this means you're losing the 'Agentic Commerce' era, where AI acts on behalf of users.

By the end of this guide, you will diagnose why your website is invisible to AI bots. We will show you the precise strategies to ensure your content is not just found, but actively cited by generative AI. And you won't need a complete website rebuild to do it.

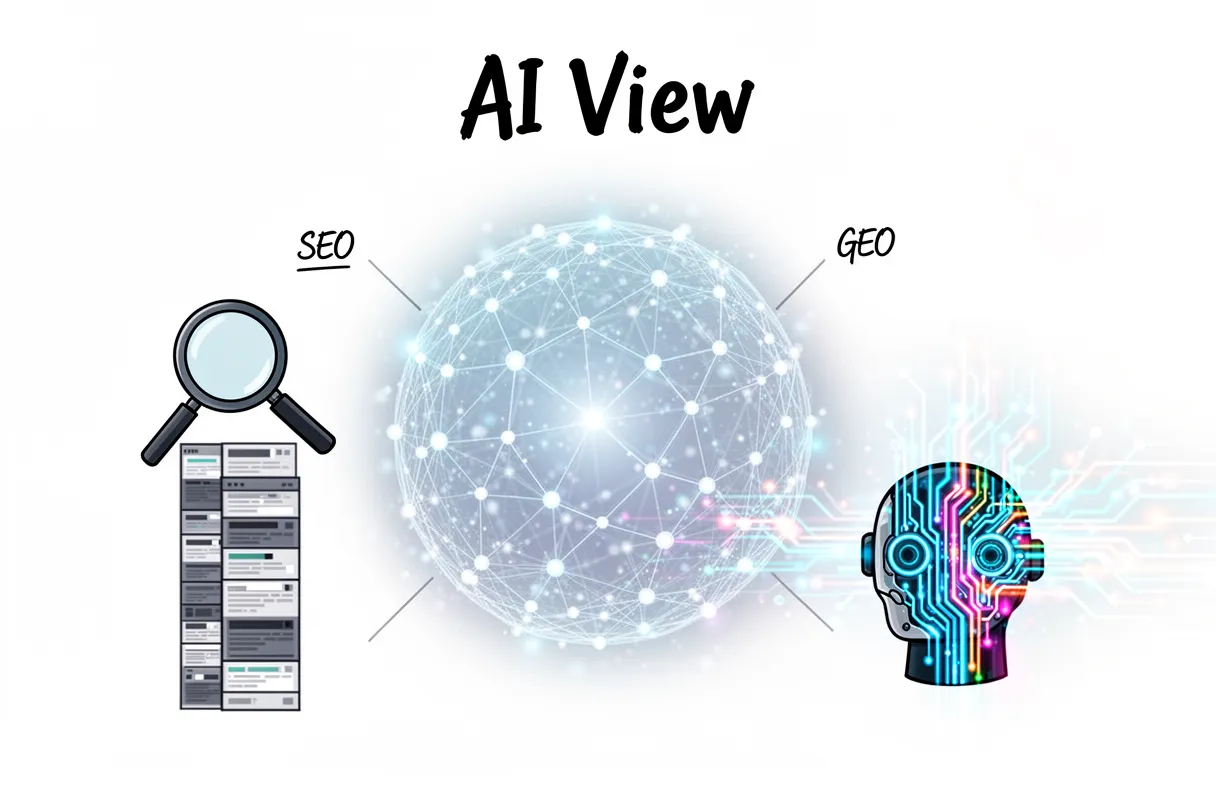

Why AI bots see the web differently than Google

AI bots consume web content with a fundamentally different objective than traditional search engines like Google. They aren't just indexing pages for a list of blue links. Instead, their primary goal is to identify and assimilate information that can be directly included in generative AI answers.

This means your website needs to be understood, not just found.

Traffic from AI crawlers is experiencing dramatic growth. We’ve seen a 305% increase in GPTBot traffic alone over the past year. In fact, AI agents now account for 33% of all organic search activity, pulling information directly from the web to synthesize responses. This new reality demands a fresh strategy. Vercel's research on the rise of the AI crawler points to the same trend (https://vercel.com/blog/the-rise-of-the-ai-crawler).

Traditional search engine crawling focuses on ranking documents based on relevance and authority signals. The outcome is usually a list of links. AI crawlers, however, are scanning for LLM visibility: content structured for direct comprehension and factual extraction.

This isn't about getting a click; it’s about getting cited.

The distinction creates a critical divide between traditional SEO and what we call Generative Engine Optimization (GEO).

| Feature | Traditional SEO (Googlebot Model) | Generative Engine Optimization (AI Bot Model) |
|---|---|---|
| Primary Goal | Index pages, rank for blue links | Extract facts, feed generative answers |
| Visibility Metric | Page #1 ranking, organic clicks | AI answer inclusion, direct citations |
| Content Focus | Keywords, backlinks, user experience | Factual accuracy, entity relationships, clarity |
| Crawling Intent | Discover, categorize, rank | Understand, synthesize, attribute |

Traditional crawling seeks signals of authority to point users to your site. AI crawling actively extracts information to deliver answers. You become the source, not just a result. Your brand must adapt. This requires more than just good keywords. It demands an intentional content architecture designed for direct machine comprehension.

How AI agents process your content

AI agents process content not by reading pages top-to-bottom like humans, but by scraping snippets and targeting specific data points buried deep within your site's structure. They don't consume entire documents. Instead, these sophisticated systems focus on extracting specific facts, named entities, and direct answers to questions. This method differs starkly from traditional search engines, which index and rank whole pages.

Much of your valuable content may become invisible. This is especially true for information dynamically loaded by JavaScript or buried behind an infinite scroll. AI indexing bypasses these elements, often missing crucial brand details.

Understanding this extraction-based processing helps explain why AI search works so differently from traditional methods. Your brand's visibility hinges on more than just discoverability.

The shift from blue links to direct inclusion

The era of relying solely on a blue link in search results is over. AI search demands your content becomes the answer itself, delivered directly to the user.

More than 62% of users now turn to platforms like ChatGPT or Google Gemini for direct, synthesized answers. This means they are no longer navigating to your website through traditional links.

Direct inclusion is the new visibility. Your brand's valuable information gets extracted, summarized, and presented by AI models at the very top of a conversational search query.

This shift prioritizes answer-first intent. Users pose questions to conversational AI, expecting an immediate, comprehensive response. They don't want to click through ten pages to piece together information.

Instead, the AI model fulfills that intent by synthesizing information and often attributing sources, without the user ever needing to leave the AI interface. Your content serves as the raw material.

This creates a formidable 'moat' for future market presence. Brands that achieve this level of direct inclusion effectively own the narrative at the point of inquiry. They become the trusted voice the AI relies upon.

We've observed this across hundreds of client audits. Sites optimized for legacy SEO frequently disappear from these new, direct answer environments. It’s not about finding your site anymore; it's about being part of the AI's response.

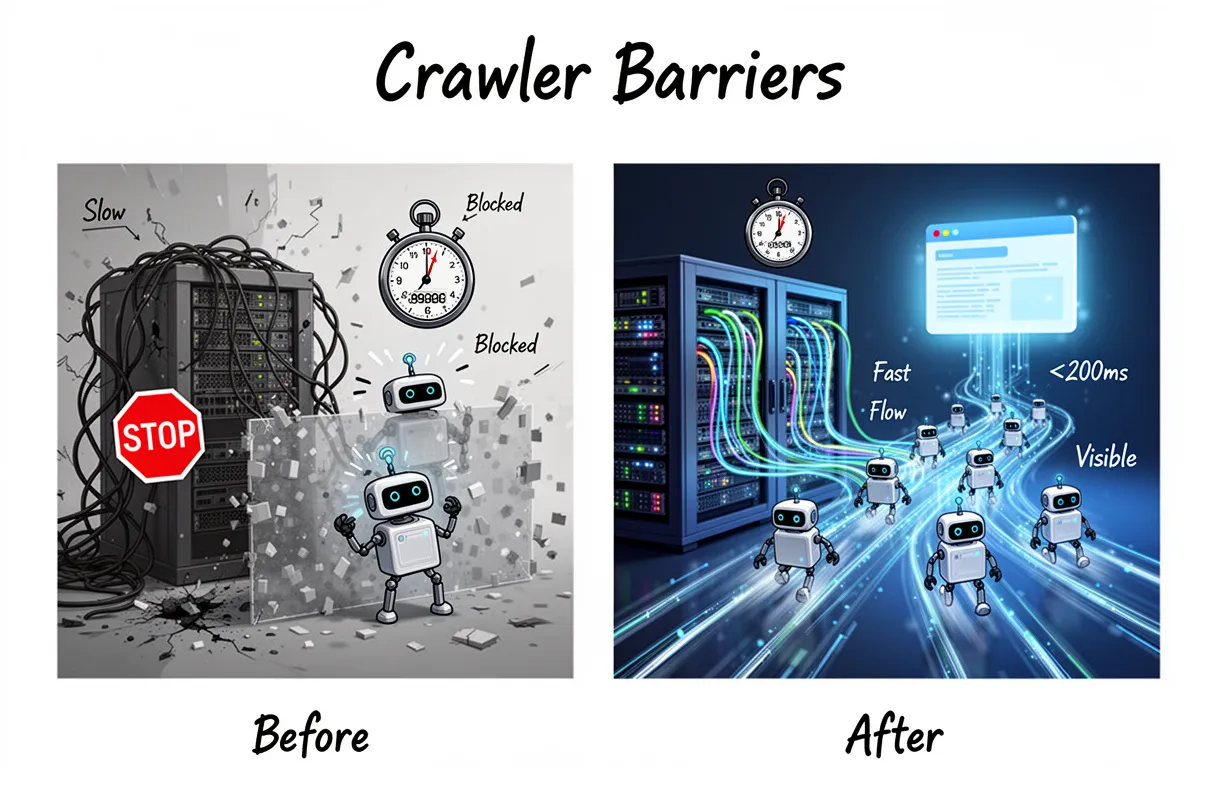

Technical barriers that block AI crawlers

Technical barriers are the invisible walls that actively block AI crawlers from ever seeing your brand's valuable content. When your site infrastructure isn't optimized, these advanced agents simply move on, never extracting your information for direct AI inclusion.

Many websites struggle with basic site speed issues. This creates a significant hurdle for AI agents, which prioritize efficiency and rapid data ingestion. They don't have infinite time.

According to our observations across diverse infrastructure, sites with server response times under 200ms receive 3x more crawler visits from AI agents than slower counterparts. That's a massive difference in potential visibility.

Slow servers mean fewer tokens processed. It directly impacts how much of your site these AI bots can even attempt to understand.

We've found that combined AI crawler traffic, encompassing all major AI agents, now represents 20% of total Googlebot activity on major infrastructure. This isn't a small fraction; it's a critical segment you cannot afford to alienate.

Ignoring these technical crawl barriers means a substantial portion of your potential AI audience won't ever encounter your brand. Their algorithms are designed to find and prioritize responsive, fast content.

Poor site speed is no longer just a user experience problem; it's a direct threat to your AI search presence. It dictates whether AI agents pause to analyze your content or bypass it entirely.

You might have incredible content, but if the servers don't deliver it swiftly, it essentially doesn't exist to modern AI. We actively monitor this for our clients. (It's often the first thing we check.)

To secure direct inclusion, your technical foundation must be impeccable. It's the gatekeeper to AI visibility.
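One rough way to spot-check the 200ms figure mentioned above is to time a few requests yourself. The sketch below is a minimal illustration, not a monitoring tool. The function names, the simplified `GPTBot` User-Agent string, and the use of the median are all assumptions made for this example. Timing from your own machine also includes network latency, so treat the result as an upper bound on server response time.

```python
import statistics
import time
import urllib.request


def measure_once_ms(url: str, user_agent: str = "GPTBot", timeout: float = 10.0) -> float:
    """Time one request until the first body byte arrives, in milliseconds.

    The short "GPTBot" User-Agent is a simplified stand-in, not the real header.
    """
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    start = time.perf_counter()
    with urllib.request.urlopen(request, timeout=timeout) as response:
        response.read(1)  # wait for the first byte only
    return (time.perf_counter() - start) * 1000.0


def within_budget(samples_ms: list[float], budget_ms: float = 200.0) -> bool:
    """Return True when the median of the samples is under the budget."""
    return statistics.median(samples_ms) < budget_ms


# Example with made-up timings, so no network access is needed:
print(within_budget([120.0, 180.0, 450.0]))  # median 180.0 -> True
print(within_budget([220.0, 310.0, 190.0]))  # median 220.0 -> False
```

To measure a real page, collect several `measure_once_ms("https://your-site.example/")` samples and pass the list to `within_budget`.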

Misconfigured robots.txt settings

Incorrect robots.txt configurations directly prevent AI agents from accessing and indexing your site, ensuring your content never reaches AI search results. Many site owners unknowingly block critical AI crawlers like GPTBot, ClaudeBot, and PerplexityBot, treating them as traditional spam bots.

This explicit block immediately excludes your content from their respective AI models. If these bots cannot crawl, they cannot learn from your brand's unique information.

The impact of these robots.txt blocks is substantial. According to recent data from Vercel, GPTBot alone generates 569 million requests monthly across Vercel's network.

And ClaudeBot adds another 370 million monthly requests to that total. Blocking these isn't a minor oversight; it means forfeiting nearly a billion potential data points that could inform AI responses about your brand.

Traditional robots.txt strategies focused on preventing SEO value leaks or reducing server load. For modern AI search, the goal shifts to maximizing inclusion and data ingestion.

You actively need to allow these powerful agents to scan your site. Ignoring them cuts you off from a rapidly growing segment of online visibility.
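A quick way to see whether a robots.txt file blocks the crawlers named above is to parse it with Python's standard `urllib.robotparser`. This is a minimal sketch: the sample file, the `ai_crawler_access` helper, and the `example.com` URL are hypothetical and only illustrate the check.

```python
from urllib.robotparser import RobotFileParser

AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str = "https://example.com/") -> dict[str, bool]:
    """Report, per AI user agent, whether robots_txt allows fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_AGENTS}


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

result = ai_crawler_access(SAMPLE_ROBOTS_TXT)
print(result)  # {'GPTBot': False, 'ClaudeBot': True, 'PerplexityBot': True}
```

Removing the `GPTBot` group, or changing its rule to `Allow: /`, would make all three agents report `True` for this sample.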

The risk of login walls and JavaScript rendering

Content locked behind login walls or heavily reliant on complex JavaScript rendering remains largely invisible to modern AI agents, effectively removing your valuable information from their knowledge base. These technical barriers create blind spots for bots designed to extract and synthesize information.

AI agents, unlike human users, cannot click "accept cookies," log into an account, or interact with intricate UI elements to reveal content. They require direct access to machine-readable content.

Forcing a bot through a login page or requiring it to execute extensive client-side JavaScript often results in a blank page or an incomplete understanding of your brand's offerings. This means your expertly crafted content goes unseen.

We've observed this directly in our analyses; even with the best content, if an AI bot can't render it, it's as if it doesn't exist.

Consider the immense growth of newer AI models. PerplexityBot, for instance, has seen an astounding 157,000% growth in requests recently. These bots are actively seeking data.

If your content remains trapped behind a login wall or hidden by inefficient JavaScript rendering, you forfeit the chance for these rapidly growing agents to include your brand. You miss out on critical direct inclusion opportunities.

Our focus is always on ensuring every piece of your content is readily available and interpretable for these powerful new crawlers. This is the only way to secure a footprint in AI search.
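To approximate what a crawler that does not execute JavaScript would see, you can check whether key phrases appear in the raw HTML before any script runs. The sketch below assumes that simplified model of a crawler. The class name, helper name, and sample pages are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text outside <script> and <style>, without running any JavaScript."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_static_html(html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the page's static text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


SERVER_RENDERED = "<html><body><h1>Acme Widgets</h1><p>Free shipping on orders over $50.</p></body></html>"
CLIENT_RENDERED = '<html><body><div id="root"></div><script>render("Free shipping on orders over $50.")</script></body></html>'

print(missing_from_static_html(SERVER_RENDERED, ["Free shipping"]))  # []
print(missing_from_static_html(CLIENT_RENDERED, ["Free shipping"]))  # ['Free shipping']
```

The second page only contains the phrase inside a script, so a reader of the static HTML never sees it.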

Content structure flaws that confuse LLMs

Large Language Models struggle to process and accurately synthesize information from web pages lacking clear, semantic structure. This difficulty largely arises from their inherent computational constraints, specifically token limits.

Each LLM operates under a ceiling for how much text it can analyze at once.

For example, GPT-4 Turbo processes up to 128,000 tokens, Claude 3 extends to 200,000 tokens, and Gemini Pro varies from 128,000 to 1 million tokens depending on the specific model.

When content sprawls without logical headings or a clear hierarchy, LLMs exhaust these token budgets on irrelevant boilerplate or fragmented information.

We've observed this in our analysis: A single complex page can quickly overwhelm an LLM's capacity if it doesn't offer a strong semantic flow to guide its parsing.

It's not about page length alone. Even short articles with poor structure get truncated or misunderstood.

An LLM, attempting to generate a concise answer, might simply ignore entire sections if it can't discern their relevance or connection to the main topic.

This means your key insights, product features, or competitive advantages vanish from the AI's understanding, becoming invisible to users relying on AI search for answers.

Without proper logical headings (H1, H2, H3), clear paragraph breaks, and a deliberate content architecture, your valuable information is effectively siloed, or worse, entirely overlooked.
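A simple automated check can flag the most obvious heading problems. The sketch below reads heading levels from HTML and reports skipped levels. The "exactly one h1" rule is a common convention assumed for this example, not a requirement stated by any AI vendor, and the names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Record the level (1-6) of every heading in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.levels: list[int] = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def outline_problems(html: str) -> list[str]:
    """Return human-readable problems found in the heading hierarchy."""
    parser = HeadingOutline()
    parser.feed(html)
    parser.close()
    problems = []
    h1_count = parser.levels.count(1)
    if h1_count != 1:
        problems.append(f"expected exactly one h1, found {h1_count}")
    for previous, current in zip(parser.levels, parser.levels[1:]):
        if current > previous + 1:
            problems.append(f"h{previous} is followed by h{current} (skipped level)")
    return problems


print(outline_problems("<h1>Guide</h1><h2>Setup</h2><h3>Install</h3>"))  # []
print(outline_problems("<h1>Guide</h1><h3>Install</h3>"))
# ['h1 is followed by h3 (skipped level)']
```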

Why E-E-A-T matters for AI search inclusion

E-E-A-T, or Experience, Expertise, Authoritativeness, and Trustworthiness, serves as the bedrock for AI search inclusion, defining how agents perceive and prioritize your content. These advanced models don't just "read" like humans; they parse explicit signals to determine credibility.

AI systems evaluate E-E-A-T by analyzing semantic connections and verifiable information, not subjective human judgment. They look for explicit mentions of authors, their credentials, and demonstrable proof of experience.

Crucially, missing entity signals are invisibility triggers for AI. This means if your brand, products, or key individuals aren't clearly defined as distinct entities with associated attributes, AI struggles to connect the dots.

We often see sites lacking consistent NAP (Name, Address, Phone) information across the web. Or, even worse, no clear author bios for expert content.

Weak third-party citations further compound this issue. AI agents seek robust endorsement from other reputable sources to validate your brand's authority on a topic.

Without these strong authority signals, your content might be deemed less reliable, pushed down in AI-generated answers, or simply ignored. This is why the foundational shift toward understanding entity density is more critical than ever before.

And because AI prioritizes factual accuracy, any inconsistencies directly undermine trustworthiness. AI systems penalize ambiguity.

Our analyses show that pages with strong, consistent entity definitions and a clear chain of third-party validation are far more likely to be directly included in AI responses. It is a direct signal of relevance and truth.
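The NAP consistency point above lends itself to a small script. The sketch below normalizes name, address, and phone values and lists the sources that disagree with a reference listing. The sample data and function names are made up, and the normalization (strip punctuation, lowercase, digits-only phone) is a deliberately simple assumption that does not handle country codes or abbreviations.

```python
import re


def normalize_nap(name: str, address: str, phone: str) -> tuple[str, str, str]:
    """Lowercase and strip punctuation from name/address; keep only phone digits."""

    def clean(text: str) -> str:
        without_punctuation = re.sub(r"[^\w\s]", "", text)
        return re.sub(r"\s+", " ", without_punctuation).strip().lower()

    return clean(name), clean(address), re.sub(r"\D", "", phone)


def inconsistent_sources(listings: dict[str, tuple[str, str, str]], reference: str) -> list[str]:
    """Return the sources whose normalized NAP differs from the reference source."""
    expected = normalize_nap(*listings[reference])
    return [source for source, nap in listings.items() if normalize_nap(*nap) != expected]


# Hypothetical listings for one business across three places.
LISTINGS = {
    "website": ("Acme Widgets, Inc.", "12 Main St., Springfield", "(555) 010-0199"),
    "directory": ("acme widgets inc", "12 Main St Springfield", "555-010-0199"),
    "social": ("Acme Widgets", "12 Main St, Springfield", "555 010 0199"),
}

print(inconsistent_sources(LISTINGS, reference="website"))  # ['social']
```

Here the directory listing differs only in punctuation and case, so it passes, while the social profile drops "Inc" from the name and is flagged.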

How to prevent AI hallucinations of your brand

Preventing AI hallucinations about your brand demands content that is undeniably clear, scannable, and designed for explicit machine understanding, leaving no room for interpretive error. This means creating direct, fact-rich answers that LLMs can easily extract and quote accurately.

Here’s how to build your content to block AI from misrepresenting your brand:

- Answer Questions Directly and Concisely. LLMs seek immediate, unambiguous answers. Structure your content so the core answer to any given question appears in the very first sentence of a section or paragraph. This provides the shortest path for an AI to extract correct information.

Every piece of content should be an information beacon, not a labyrinth.

- Define Entities with Precision. Explicitly define your brand, products, services, and key personnel as distinct entities. Use clear, consistent nomenclature across your entire digital footprint. This avoids AI creating fuzzy connections or inventing attributes. For example, "FlipAEO is an Advanced Entity Optimization platform that helps enterprise brands dominate AI search and agentic commerce by identifying and optimizing their digital entities."

- Implement Robust Structured Data (Schema Markup). Schema markup is the machine-readable language that tells AI exactly what your content means. Use JSON-LD to define your organization, products, services, and any critical factual claims. This literal translation is a powerful defense against hallucinations. We’ve observed that companies with comprehensive, accurate schema see significantly fewer AI misinterpretations.

- Ensure Factual Consistency Across All Channels. Any discrepancy between your website, social profiles, third-party listings, or press releases can trigger AI uncertainty and lead to generated falsehoods. Your brand's Name, Address, Phone (NAP), product specifications, and service descriptions must be uniformly presented. AI prioritizes internal consistency as a trust signal.

- Provide Verifiable Internal and External Citations. Back up every significant claim with a clear reference. Link to internal data, research, or specific product pages. For external facts, cite neutral, authoritative sources. AI agents use these links as content verification points, cross-referencing to confirm accuracy and build confidence in your information.

- Maintain a Clear Content Structure. Use H2, H3, and H4 headings to break down complex topics into digestible segments. Employ bulleted or numbered lists for features, benefits, or sequential steps. This provides a clear structure for AI to follow, ensuring it can parse and index information logically, preventing it from inventing connections between disparate paragraphs.

AI struggles with dense, unstructured text. It performs better with segmentation and clear hierarchies.

- Regularly Audit and Update Content. Outdated or irrelevant information is a prime candidate for AI hallucinations. Implement a routine content audit schedule to ensure all information is current and accurate. Remove or update old claims. This continuous content verification process reinforces your brand's authority and keeps AI-generated summaries precise.

You must simplify and clarify your content for machines, not just humans. The clearer you are, the less chance AI has to invent its own version of your brand.
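To make the structured data step in the list above concrete, here is a minimal sketch that builds an `Organization` JSON-LD snippet with the schema.org vocabulary. The brand name, URLs, and the `organization_jsonld` helper are placeholders invented for illustration. Which properties a given AI system actually reads is not something this example can confirm.

```python
import json


def organization_jsonld(name: str, url: str, description: str, same_as: list[str]) -> str:
    """Return a <script> tag holding schema.org Organization markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        "sameAs": list(same_as),  # profiles that refer to the same entity
    }
    body = json.dumps(data, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Placeholder values; replace them with your brand's real, consistent details.
snippet = organization_jsonld(
    name="Example Brand",
    url="https://www.example.com/",
    description="Example Brand makes widgets for small workshops.",
    same_as=["https://www.linkedin.com/company/example-brand"],
)
print(snippet)
```

Using one generator for every page keeps the name, URL, and description identical everywhere, which supports the consistency point made above.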

Preparing for Agentic Commerce and AI interactions

AI is shifting beyond simple information retrieval; it's now about action.

AI agents are no longer just answering questions about your products. They are moving towards Agentic Commerce, actively performing tasks like comparing, recommending, and even initiating purchases on behalf of users. This means your website needs to do more than just display content.

For your products to even register in this new execution layer, they require machine-readable data. Think of it as the language AI agents speak to understand your offerings in an actionable way. Without it, your brand remains invisible for buying intent.

Crucially, this goes beyond basic product descriptions. Pricing data, including current discounts and availability, must be structured. Your inventory levels need to be instantly queryable. Shipping options and associated costs also demand clear, structured formats.

These are not suggestions. This is fundamental for an AI agent to confidently recommend your specific SKU. (It can't suggest what it can't verify in real-time.) We see this as a critical gap for many businesses.

A human can skim text, guess at a price, and infer shipping. An AI agent requires explicit data points. This includes SKU numbers, product variants, payment methods accepted, and precise delivery timelines.

Just as clear content structure prevents hallucinations, structured commerce data prevents missed sales opportunities. AI agents will simply bypass brands that offer ambiguous, unstructured commercial information. They can't risk recommending something unavailable or mispriced.

This level of data standardization can feel overwhelming. Many platforms aren't built for this out-of-the-box. We have observed that brands that adopt this early will dominate early Agentic Commerce transactions.
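As an illustration of what structured commerce data can look like, the sketch below turns a product record into schema.org `Product` and `Offer` JSON-LD and refuses to emit markup when a field is missing. The record layout, the required-field list, and the sample SKU are assumptions for this example. Real feeds and agent requirements will differ.

```python
import json

# Hypothetical minimum set of fields for this sketch.
REQUIRED_FIELDS = ("name", "sku", "price", "currency", "in_stock", "shipping_cost")


def product_jsonld(product: dict) -> str:
    """Build schema.org Product/Offer JSON-LD, failing loudly on missing fields."""
    missing = [field for field in REQUIRED_FIELDS if field not in product]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    availability = "InStock" if product["in_stock"] else "OutOfStock"
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "sku": product["sku"],
        "offers": {
            "@type": "Offer",
            "price": f'{product["price"]:.2f}',
            "priceCurrency": product["currency"],
            "availability": f"https://schema.org/{availability}",
            "shippingDetails": {
                "@type": "OfferShippingDetails",
                "shippingRate": {
                    "@type": "MonetaryAmount",
                    "value": f'{product["shipping_cost"]:.2f}',
                    "currency": product["currency"],
                },
            },
        },
    }
    return json.dumps(data, indent=2)


SAMPLE_PRODUCT = {
    "name": "Example Widget",
    "sku": "WID-001",
    "price": 19.99,
    "currency": "USD",
    "in_stock": True,
    "shipping_cost": 4.5,
}
markup = product_jsonld(SAMPLE_PRODUCT)
print(markup)
```

Failing on incomplete records is a deliberate choice here: publishing a product with no price or availability is exactly the ambiguity the section warns about.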

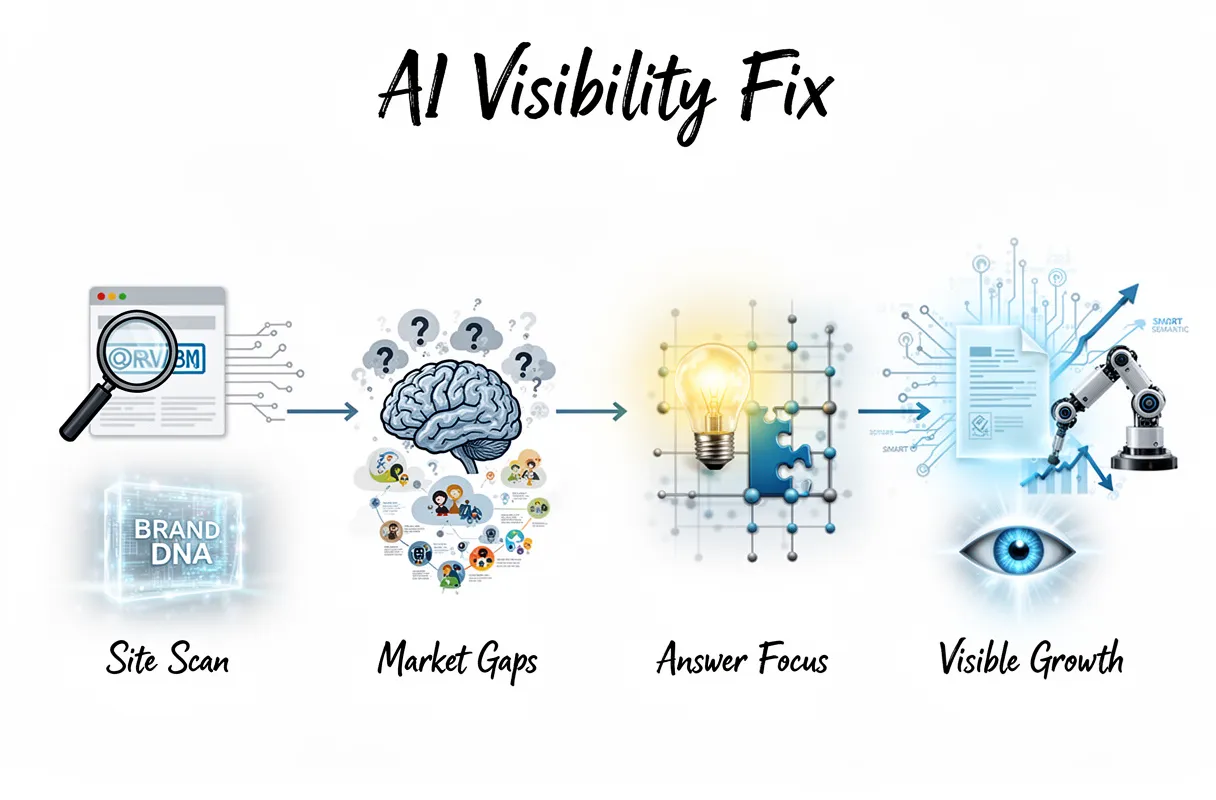

How to fix your visibility using FlipAEO

Fixing your brand's AI visibility requires a dedicated, machine-first approach to content. FlipAEO provides a systematic framework to ensure your brand's answers are discoverable and utilized by advanced AI models.

Here’s our process for rebuilding your content authority for the AI era:

- Reverse-Engineer Your Brand DNA: We start by ingesting your existing website URL. Our platform then meticulously reverse-engineers your site, dissecting every piece of content to understand your Brand DNA. This isn't just about keywords; it’s about identifying your core offerings, unique selling propositions, and target audience on a semantic level.

- Uncover AI Knowledge Gaps: Our system then studies your entire industry category. It actively identifies the questions and content angles that current AI models either answer poorly or don't address at all regarding your niche. We look for areas where your brand should be the definitive voice.

- Map Essential Answers: From these gaps, we pinpoint "what answers should exist." This involves mapping crucial information related to your products, services, and industry expertise that AI agents are likely to seek. It's about proactive content creation, anticipating AI queries.

- Automate Answer-First Content Production: With the gaps identified, FlipAEO automates the production of one research-backed, answer-first article per day. Each piece directly addresses an identified AI knowledge gap, built for clarity and machine readability. These articles include smart semantic internal linking, ensuring a robust, interconnected knowledge graph for AI crawlers.

This method transforms your site into a highly optimized knowledge hub. We ensure your brand isn't just present, but authoritative in the eyes of any AI model looking for verifiable facts.

Conducting an AI-first content audit

An AI-first content audit pinpoints exactly where your brand's expertise is missing from the knowledge base of large language models. This isn't a traditional SEO audit; it's a deep dive into semantic relevance, identifying content gaps that leave your brand invisible to AI agents.

We move beyond simple keyword analysis, instead performing a comprehensive content audit that scrutinizes your entire category space. Our system maps the landscape of queries and answers within your niche. It highlights areas where current AI models offer incomplete or even incorrect information, particularly concerning your specific products or services.

This process involves advanced topical clustering. We identify clusters of related questions and topics where your brand should be the definitive authority, yet currently lacks comprehensive, AI-digestible answers. The goal is to surface not just what people search for, but what AI needs to understand to accurately represent your brand.

Many sites struggle with basic AI crawlability. They might have excellent content for human readers, but its structure or technical configuration makes it opaque to LLMs. Our audit reveals these hidden barriers, from inconsistent schema to content rendered in ways AI can't parse effectively. (This often includes content behind complex JavaScript.)

We analyze what questions AI agents are asking within your industry, then cross-reference those against your existing content. This flags specific instances where your brand is being "ignored" by LLMs, despite possessing the relevant information. It’s about building a robust knowledge graph that AI can effortlessly cite.

For brands aiming to dominate the future of search, understanding these gaps is paramount. We provide a clear roadmap for creating content that fills these voids, ensuring your brand becomes a trusted source. You can explore effective strategies to get your brand cited by ChatGPT to further amplify your presence in AI-powered search.

This isn't merely about ranking. It’s about cultivating undeniable authority directly within the LLM's understanding of your industry.

Future-proofing your site for multimodal AI

Future-proofing your site for multimodal AI means preparing for a world where AI agents process information through text, images, audio, and video simultaneously. This demands moving beyond traditional SEO, optimizing every media type your brand creates. It ensures deep understanding, not just surface-level recognition.

You need to integrate robust metadata across all visual and auditory assets. For images, this means detailed alt-text, descriptive captions, and schema markup that describes content and context. We often find clients overlooking image object tagging. (This provides crucial semantic layers for visual recognition models.)

Videos require comprehensive transcripts, time-stamped keyframe descriptions, and rich semantic annotations. Think of it as feeding the AI a complete storyboard and script for every piece of visual content. This makes your media genuinely searchable by AI.

Implementing advanced structured data is also non-negotiable. This goes beyond basic schema for articles or products. You will define intricate relationships between textual descriptions, product specifications, visual components, and user reviews. This builds an interconnected knowledge graph that multimodal LLMs can easily parse.

The coming wave of regulatory scrutiny demands attention. Regulatory compliance will directly impact your visibility. Content flagged for bias, misinformation, or privacy violations by new AI safety filters faces severe downranking or exclusion. We see this as a critical, often ignored, component of future AI search.

You must audit your content against emerging ethical AI guidelines. This includes ensuring data provenance and transparency in content generation. It's about building trust, not just optimizing for clicks. Your brand's reputation for accuracy becomes a ranking factor.

To stay ahead, begin by standardizing your internal content tagging protocols across all media. Then, invest in tools that automatically generate and embed rich structured data for your entire media library. This isn't a "nice-to-have"; it's foundational for surviving the next iteration of AI search.
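A first pass at the image metadata audit described above can be automated. The sketch below lists images whose `alt` attribute is missing or empty. It assumes every image should carry descriptive text, which is not true of purely decorative images, so treat the output as a review list. The class and function names are hypothetical.

```python
from html.parser import HTMLParser


class ImageAltAudit(HTMLParser):
    """Collect the src of every <img> with a missing or empty alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.missing_alt: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag != "img":
            return
        attributes = dict(attrs)
        alt_text = (attributes.get("alt") or "").strip()
        if not alt_text:
            self.missing_alt.append(attributes.get("src") or "(no src)")


def images_missing_alt(html: str) -> list[str]:
    """Return image sources that have no usable alt text."""
    audit = ImageAltAudit()
    audit.feed(html)
    audit.close()
    return audit.missing_alt


PAGE = (
    '<img src="a.png" alt="Red widget on a workbench">'
    '<img src="b.png">'
    '<img src="c.png" alt="">'
)
print(images_missing_alt(PAGE))  # ['b.png', 'c.png']
```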

Common questions about AI website crawling

Traditional SEO isn't enough for AI crawlers anymore. You are playing a fundamentally different game, one where AI models process your content for understanding, not just keywords or backlinks.

AI crawlers like GPTBot or Perplexity’s agents build intricate knowledge graphs from your site. They don't simply rank blue links. They extract facts, relationships, and context to provide direct answers. This means your content is either a source of truth or it is ignored.

Many still ask if their existing SEO strategy covers them for this shift. It does not. SEO optimized for Google's traditional algorithm won't automatically grant you AI search visibility. The underlying mechanisms and desired outputs are distinct.

AI search visibility is the measure of how effectively an AI agent can understand, synthesize, and leverage your content as a source for direct answers, ultimately building your brand's authority. This helps ambitious brands establish themselves as recognized entities across multiple AI platforms.

This early pursuit of AI visibility is the cheapest moat available to brands right now. Getting ahead of the curve means staking your claim when competition is lower. Because once every brand catches on, the cost and effort to achieve prominence will skyrocket.

We see this as a critical opportunity. Our audits reveal many established brands are missing basic AI-first optimization. They're unknowingly leaving their content vulnerable to misinterpretation or complete exclusion from AI search results.

You cannot rely on Google's search algorithm alone to carry your brand into the AI era. You must actively optimize for a future where search is conversational, contextual, and often direct-to-answer. Build for the agent, not just the indexer.

What is the difference between Googlebot and GPTBot?

Googlebot and GPTBot process your content with fundamentally different objectives, leading to distinct impacts on your brand's digital visibility. While Googlebot traditionally indexes for rankings, GPTBot and similar agents index for knowledge synthesis.

This isn't a subtle difference. It dictates whether your content shows up as a link or becomes part of an AI's direct answer.

Here’s how these critical crawlers diverge in their approach:

| Feature | Googlebot (Traditional Search Indexing) | GPTBot (AI Knowledge Synthesis) |
|---|---|---|
| Primary Purpose | Indexes web pages to rank them in traditional blue-link search results. | Consumes data to train LLMs and build intricate knowledge graphs. |
| Indexing Goal | Discovers, categorizes, and evaluates content for relevance, authority, and ranking signals (keywords, backlinks, technical SEO). | Extracts facts, entities, relationships, and context for semantic understanding. Synthesizes information into concise answers. |
| Content Interpretation | Focuses on signals that determine a page's position in competitive keyword searches. | Prioritizes accuracy, comprehensiveness, and the ability to serve as a reliable source for generative AI responses. |
| Output | Search result links, snippets, rich results, leading users to your site. | Direct answers, summaries, conversational AI responses, inclusion in generative output, often keeping users on the AI platform. |

Googlebot works to connect users to your site via ranked links. Its purpose is finding the best page for a query.

GPTBot, however, focuses on finding the best information to answer a query directly. It doesn't care about a "page" as much as it cares about verifiable facts and data points. This extraction method means your site’s content can be consumed and repurposed without a direct click-through.

And the scale is significant. Some estimates suggest GPTBot activity can represent up to 20% of Googlebot's activity on certain sites. This means a substantial portion of your traffic, or potential visibility, is now dictated by how well AI agents can understand your brand's unique value proposition.

You must optimize not just for discovery, but for direct inclusion as a source of truth for these powerful new agents.